Renew DOP-C01 Test Preparation For AWS Certified DevOps Engineer - Professional Certification


Free DOP-C01 practice questions follow:

NEW QUESTION 1

You have defined a Linux-based instance stack in OpsWorks. You now want to attach a database to the OpsWorks stack. Which of the following is an important step to ensure that the application on the Linux instances can communicate with the database?

- A. Add another stack with the database layer and attach it to the application stack.

- B. Configure SSL so that the instance can communicate with the database.

- C. Add the appropriate driver packages to ensure the application can work with the database.

- D. Configure database tags for the OpsWorks application layer.

Answer: C

Explanation:

The AWS documentation notes the following important point:

For Linux stacks, if you want to associate an Amazon RDS service layer with your app, you must add the appropriate driver package to the associated app server layer, as follows:

1. Click Layers in the navigation pane and open the app server's Recipes tab.

2. Click Edit and add the appropriate driver package to OS Packages. For example, you should specify mysql if the layer contains Amazon Linux instances and mysql-client if the layer contains Ubuntu instances.

3. Save the changes and redeploy the app.

For more information on OpsWorks app connectivity, please visit the URL below: http://docs.aws.amazon.com/opsworks/latest/userguide/workingapps-connectdb.html
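
As a rough illustration of step 2, the choice of driver package can be written as a small lookup. This is a minimal Python sketch: the helper name and the OS labels used as keys are hypothetical, and only the `mysql` / `mysql-client` pairing comes from the documentation quoted above.

```python
# Hypothetical helper: map a layer's operating system to the MySQL driver
# package named in the OpsWorks guidance quoted above.
DRIVER_PACKAGES = {
    "Amazon Linux": "mysql",
    "Ubuntu": "mysql-client",
}


def driver_package_for(os_name: str) -> str:
    """Return the package to add to the app server layer's OS Packages."""
    try:
        return DRIVER_PACKAGES[os_name]
    except KeyError:
        raise ValueError(f"no driver package known for {os_name!r}") from None
```

Any other operating system raises an error here rather than guessing a package name.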

NEW QUESTION 2

Your company is using an Auto Scaling group to scale instances out and in. Traffic is expected to peak every Monday at 8am and to come down before the weekend, on Friday at 5pm. How should you configure Auto Scaling in this case?

- A. Create dynamic scaling policies to scale up on Monday and scale down on Friday

- B. Create a scheduled policy to scale up on Friday and scale down on Monday

- C. Create a scheduled policy to scale up on Monday and scale down on Friday

- D. Manually add instances to the Auto Scaling group on Monday and remove them on Friday

Answer: C

Explanation:

The AWS Documentation mentions the following for Scheduled scaling

Scaling based on a schedule allows you to scale your application in response to predictable load changes. For example, every week the traffic to your web application starts to increase on Wednesday, remains high on Thursday, and starts to decrease on Friday. You can plan your scaling activities based on the predictable traffic patterns of your web application.

For more information on scheduled scaling for Auto Scaling, please visit the URL below:

• http://docs.aws.amazon.com/autoscaling/latest/userguide/schedule_time.html
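
The Monday/Friday schedule from the question can be sketched as two recurring scheduled actions. This is a minimal Python sketch under stated assumptions: the group name and capacity values are hypothetical, and the dictionaries only mirror the keyword arguments that boto3's `put_scheduled_update_group_action` accepts; nothing here calls AWS.

```python
from datetime import datetime

# Hypothetical group name and capacities; only the Monday 08:00 and
# Friday 17:00 times come from the question.
SCALE_OUT = {
    "AutoScalingGroupName": "web-asg",
    "ScheduledActionName": "monday-scale-out",
    "Recurrence": "0 8 * * 1",   # cron: 08:00 every Monday
    "DesiredCapacity": 8,
}
SCALE_IN = {
    "AutoScalingGroupName": "web-asg",
    "ScheduledActionName": "friday-scale-in",
    "Recurrence": "0 17 * * 5",  # cron: 17:00 every Friday
    "DesiredCapacity": 2,
}


def expected_capacity(now: datetime) -> int:
    """Return the capacity in force at `now` under the two weekly actions."""
    # Minutes elapsed since Monday 00:00 (datetime.weekday(): Monday == 0).
    minute_of_week = now.weekday() * 24 * 60 + now.hour * 60 + now.minute
    scale_out_at = 0 * 24 * 60 + 8 * 60    # Monday 08:00
    scale_in_at = 4 * 24 * 60 + 17 * 60    # Friday 17:00
    in_peak = scale_out_at <= minute_of_week < scale_in_at
    return SCALE_OUT["DesiredCapacity"] if in_peak else SCALE_IN["DesiredCapacity"]
```

The `expected_capacity` helper is only a local check of the schedule logic; it ignores time zones, which a real schedule would need to account for.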

NEW QUESTION 3

You have launched a CloudFormation template, but are receiving a failure notification after the template was launched. What is the default behavior of CloudFormation in such a case?

- A. It will rollback all the resources that were created up to the failure point.

- B. It will keep all the resources that were created up to the failure point.

- C. It will prompt the user on whether to keep or terminate the already created resources

- D. It will continue with the creation of the next resource in the stack

Answer: A

Explanation:

The AWS documentation mentions:

AWS CloudFormation ensures all stack resources are created or deleted as appropriate. Because AWS CloudFormation treats the stack resources as a single unit, they must all be created or deleted successfully for the stack to be created or deleted. If a resource cannot be created, AWS CloudFormation rolls the stack back and automatically deletes any resources that were created.

For more information on CloudFormation, please refer to the link below: http://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/stacks.html
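
The all-or-nothing behaviour described above can be modelled in a few lines. This is a toy Python sketch, not CloudFormation's actual implementation: `create_stack` and the caller-supplied `create` function are hypothetical, and only the status names `CREATE_COMPLETE` and `ROLLBACK_COMPLETE` are taken from CloudFormation.

```python
def create_stack(resources, create):
    """Create resources in order; on any failure, delete what was created.

    `create` is a caller-supplied function that raises on failure.
    Returns a (status, remaining_resources) tuple.
    """
    created = []
    for name in resources:
        try:
            create(name)
            created.append(name)
        except Exception:
            # Roll back: remove already-created resources in reverse order.
            while created:
                created.pop()
            return "ROLLBACK_COMPLETE", created
    return "CREATE_COMPLETE", created
```

If the second of three resources fails, the first is deleted and the third is never attempted, which matches answer A.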

NEW QUESTION 4

As part of your deployment process, you are configuring your continuous integration (CI) system to build AMIs. You want to build them in an automated manner that is also cost-efficient. Which method should you use?

- A. Attach an Amazon EBS volume to your CI instance, build the root file system of your image on the volume, and use the CreateImage API call to create an AMI out of this volume.

- B. Have the CI system launch a new instance, bootstrap the code and apps onto the instance and create an AMI out of it.

- C. Upload all contents of the image to Amazon S3, launch the base instance, download all of the contents from Amazon S3 and create the AMI.

- D. Have the CI system launch a new spot instance, bootstrap the code and apps onto the instance and create an AMI out of it.

Answer: D

Explanation:

The AWS documentation mentions the following:

If your organization uses Jenkins software in a CI/CD pipeline, you can add Automation as a post-build step to pre-install application releases into Amazon Machine Images (AMIs). You can also use the Jenkins scheduling feature to call Automation and create your own operating system (OS) patching cadence.

For more information on Automation with Jenkins, please visit the links below:

• http://docs.aws.amazon.com/systems-manager/latest/userguide/automation-jenkins.html

• https://wiki.jenkins.io/display/JENKINS/Amazon+EC2+Plugin

NEW QUESTION 5

You have an application running on Amazon EC2 in an Auto Scaling group. Instances are being bootstrapped dynamically, and the bootstrapping takes over 15 minutes to complete. You find that instances are reported by Auto Scaling as being In Service before bootstrapping has completed. You are receiving application alarms related to new instances before they have completed bootstrapping, which is causing confusion. You find the cause: your application monitoring tool is polling the Auto Scaling Service API for instances that are In Service, and creating alarms for new previously unknown instances. Which of the following will ensure that new instances are not added to your application monitoring tool before bootstrapping is completed?

- A. Create an Auto Scaling group lifecycle hook to hold the instance in a pending:wait state until your bootstrapping is complete. Once bootstrapping is complete, notify Auto Scaling to complete the lifecycle hook and move the instance into a pending:proceed state.

- B. Use the default Amazon CloudWatch application metrics to monitor your application's health. Configure an Amazon SNS topic to send these CloudWatch alarms to the correct recipients.

- C. Tag all instances on launch to identify that they are in a pending state. Change your application monitoring tool to look for this tag before adding new instances, and then use the Amazon API to set the instance state to 'pending' until bootstrapping is complete.

- D. Increase the desired number of instances in your Auto Scaling group configuration to reduce the time it takes to bootstrap future instances.

Answer: A

Explanation:

Auto Scaling lifecycle hooks enable you to perform custom actions as Auto Scaling launches or terminates instances. For example, you could install or configure software on newly launched instances, or download log files from an instance before it terminates. After you add lifecycle hooks to your Auto Scaling group, they work as follows:

1. Auto Scaling responds to scale out events by launching instances and scale in events by terminating instances.

2. Auto Scaling puts the instance into a wait state (Pending:Wait or Terminating:Wait). The instance remains in this state until either you tell Auto Scaling to continue or the timeout period ends.

For more information on lifecycle hooks, please visit the link below:

• http://docs.aws.amazon.com/autoscaling/latest/userguide/lifecycle-hooks.html
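
The launch-side flow above can be pictured as a small state machine. This is a toy Python model, not the Auto Scaling API: the `Instance` class and `in_service` helper are hypothetical, while the state names and the `CONTINUE` result follow the Auto Scaling lifecycle vocabulary.

```python
class Instance:
    """Toy model of an Auto Scaling instance with a launch lifecycle hook."""

    def __init__(self, instance_id: str):
        self.instance_id = instance_id
        self.state = "Pending"

    def launch(self) -> None:
        # With a launch hook attached, the instance pauses in Pending:Wait.
        self.state = "Pending:Wait"

    def complete_lifecycle_action(self, result: str = "CONTINUE") -> None:
        # Called once bootstrapping has finished (or has been abandoned).
        if self.state != "Pending:Wait":
            raise RuntimeError("instance is not waiting on a lifecycle hook")
        if result == "CONTINUE":
            self.state = "Pending:Proceed"
            self.state = "InService"  # Auto Scaling then puts it in service
        else:
            self.state = "Terminating"


def in_service(instances):
    """What a monitoring tool polling for InService instances would see."""
    return [i.instance_id for i in instances if i.state == "InService"]
```

While the instance sits in `Pending:Wait`, a tool that polls for in-service instances never sees it, so no premature alarms are raised during the 15-minute bootstrap.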

NEW QUESTION 6

Your application receives very high traffic, so you have enabled Auto Scaling across multiple Availability Zones to meet its needs, but you observe that one of the Availability Zones is not receiving any traffic. What could be wrong here?

- A. Auto Scaling only works for a single Availability Zone

- B. Auto Scaling can be enabled for multi-AZ only in the North Virginia region

- C. The Availability Zone has not been added to the Elastic Load Balancer

- D. Instances need to be manually added to the Availability Zone

Answer: C

Explanation:

When you add an Availability Zone to your load balancer, Elastic Load Balancing creates a load balancer node in the Availability Zone. Load balancer nodes accept traffic from clients and forward requests to the healthy registered instances in one or more Availability Zones.

For more information on adding AZs to a CLB, please refer to the URL below:

http://docs.aws.amazon.com/elasticloadbalancing/latest/classic/enable-disable-az.html

NEW QUESTION 7

You currently have a set of instances running in your OpsWorks stacks. You need to install security updates on these servers. What does AWS recommend in terms of how the security updates should be deployed?

Choose 2 answers from the options given below.

- A. Create and start new instances to replace your current online instances. Then delete the current instances.

- B. Create a new OpsWorks stack with the new instances.

- C. On Linux-based instances in Chef 11.10 or older stacks, run the Update Dependencies stack command.

- D. Create a CloudFormation template which can be used to replace the instances.

Answer: AC

Explanation:

The AWS Documentation mentions the following

By default, AWS OpsWorks Stacks automatically installs the latest updates during setup, after an instance finishes booting. AWS OpsWorks Stacks does not automatically install updates after an instance is online, to avoid interruptions such as restarting application servers. Instead, you manage updates to your online instances yourself, so you can minimize any disruptions.

We recommend that you use one of the following to update your online instances.

Create and start new instances to replace your current online instances. Then delete the current instances. The new instances will have the latest set of security patches installed during setup.

On Linux-based instances in Chef 11.10 or older stacks, run the Update Dependencies stack command, which installs the current set of security patches and other updates on the specified instances.

For more information on OpsWorks updates, please visit the URL below:

• http://docs.aws.amazon.com/opsworks/latest/userguide/best-practices-updates.html

NEW QUESTION 8

You need the absolute highest possible network performance for a cluster computing application. You already selected homogeneous instance types supporting 10 gigabit enhanced networking, made sure that your workload was network bound, and put the instances in a placement group. What is the last optimization you can make?

- A. Use 9001 MTU instead of 1500 for Jumbo Frames, to raise packet body to packet overhead ratios.

- B. Segregate the instances into different peered VPCs while keeping them all in a placement group, so each one has its own Internet Gateway.

- C. Bake an AMI for the instances and relaunch, so the instances are fresh in the placement group and do not have noisy neighbors.

- D. Turn off SYN/ACK on your TCP stack or begin using UDP for higher throughput.

Answer: A

Explanation:

Jumbo frames allow more than 1500 bytes of data by increasing the payload size per packet, and thus increasing the percentage of the packet that is not packet overhead. Fewer packets are needed to send the same amount of usable data. However, outside of a given AWS region (EC2-Classic), a single VPC, or a VPC peering connection, you will experience a maximum path of 1500 MTU. VPN connections and traffic sent over an Internet gateway are limited to 1500 MTU. If packets are over 1500 bytes, they are fragmented, or they are dropped if the Don't Fragment flag is set in the IP header.

For more information on Jumbo Frames, please visit the URL below: http://docs.aws.amazon.com/AWSEC2/latest/UserGuide/network_mtu.html#jumbo_frame_instances
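
The payload-to-overhead argument can be checked with simple arithmetic. This is a minimal Python sketch, assuming 40 bytes of per-packet overhead (a 20-byte IPv4 header plus a 20-byte TCP header, no options); that figure is an assumption for illustration and is not stated in the explanation above.

```python
import math

# Assumption: 20-byte IPv4 header + 20-byte TCP header, no options.
HEADER_BYTES = 40


def payload_ratio(mtu: int) -> float:
    """Fraction of each full-size packet that carries application data."""
    return (mtu - HEADER_BYTES) / mtu


def packets_needed(data_bytes: int, mtu: int) -> int:
    """Number of packets required to carry `data_bytes` of payload."""
    return math.ceil(data_bytes / (mtu - HEADER_BYTES))
```

Under that assumption, a 1500-byte MTU gives a payload ratio of about 97.3% and needs 719 packets to move 1 MiB, while a 9001-byte MTU gives about 99.6% and needs 118 packets.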

NEW QUESTION 9

Which of the following services can be used to provision an ECS cluster containing the following components in an automated way:

1) Application Load Balancer for distributing traffic among various task instances running in EC2 Instances

2) Single task instance on each EC2 running as part of auto scaling group

3) Ability to support various types of deployment strategies

- A. SAM

- B. OpsWorks

- C. Elastic Beanstalk

- D. CodeCommit

Answer: C

Explanation:

You can create Docker environments that support multiple containers per Amazon EC2 instance with the multi-container Docker platform for Elastic Beanstalk. Elastic Beanstalk uses Amazon Elastic Container Service (Amazon ECS) to coordinate container deployments to multi-container Docker environments. Amazon ECS provides tools to manage a cluster of instances running Docker containers. Elastic Beanstalk takes care of Amazon ECS tasks including cluster creation, task definition, and execution.

Please refer to the AWS documentation below: https://docs.aws.amazon.com/elasticbeanstalk/latest/dg/create_deploy_docker_ecs.html

NEW QUESTION 10

You need to grant a vendor access to your AWS account. They need to be able to read protected messages in a private S3 bucket at their leisure. They also use AWS. What is the best way to accomplish this?

- A. Create an IAM User with API Access Keys. Grant the User permissions to access the bucket. Give the vendor the AWS Access Key ID and AWS Secret Access Key for the User.

- B. Create an EC2 Instance Profile on your account. Grant the associated IAM role full access to the bucket. Start an EC2 instance with this Profile and give SSH access to the instance to the vendor.

- C. Create a cross-account IAM Role with permission to access the bucket, and grant permission to use the Role to the vendor AWS account.

- D. Generate a signed S3 PUT URL and a signed S3 PUT URL, both with wildcard values and 2 year durations. Pass the URLs to the vendor.

Answer: C

Explanation:

You can use AWS Identity and Access Management (IAM) roles and AWS Security Token Service (STS) to set up cross-account access between AWS accounts. When you assume an IAM role in another AWS account to obtain cross-account access to services and resources in that account, AWS CloudTrail logs the cross-account activity.

For more information on cross-account access, please visit the URL below:

• https://aws.amazon.com/blogs/security/tag/cross-account-access/

NEW QUESTION 11

You are planning on configuring logs for your Elastic Load Balancer. At what intervals can the Elastic Load Balancer service publish logs? Choose 2 answers from the options given below.

- A. 5 minutes

- B. 60 minutes

- C. 1 minute

- D. 30 seconds

Answer: AB

Explanation:

The AWS Documentation mentions

Elastic Load Balancing publishes a log file for each load balancer node at the interval you specify. You can specify a publishing interval of either 5 minutes or 60 minutes when you enable the access log for your load balancer. By default, Elastic Load Balancing publishes logs at a 60-minute interval.

For more information on Elastic load balancer logs please see the below link: http://docs.aws.amazon.com/elasticloadbalancing/latest/classic/access-log-collection.html
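
The interval constraint can be captured in a small validation helper. This is a minimal Python sketch: `access_log_attributes` and the bucket name in the usage note are hypothetical, and the returned dictionary only mirrors the `AccessLog` structure that boto3's Classic Load Balancer `modify_load_balancer_attributes` call accepts; no AWS call is made.

```python
# Publishing intervals in minutes, per the documentation quoted above.
ALLOWED_INTERVALS = (5, 60)


def access_log_attributes(bucket: str, interval_minutes: int = 60) -> dict:
    """Build an AccessLog attribute block, rejecting unsupported intervals."""
    if interval_minutes not in ALLOWED_INTERVALS:
        raise ValueError("publishing interval must be 5 or 60 minutes")
    return {
        "AccessLog": {
            "Enabled": True,
            "S3BucketName": bucket,
            "EmitInterval": interval_minutes,
        }
    }
```

For example, `access_log_attributes("my-elb-logs", 5)` succeeds, while an interval of 1 or 30 raises `ValueError`, which is why options C and D are wrong.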

NEW QUESTION 12

You need to perform ad-hoc business analytics queries on well-structured data. Data comes in constantly at a high velocity. Your business intelligence team can understand SQL. What AWS service(s) should you look to first?

- A. Kinesis Firehose + RDS

- B. Kinesis Firehose + Redshift

- C. EMR using Hive

- D. EMR running Apache Spark

Answer: B

Explanation:

Amazon Kinesis Firehose is the easiest way to load streaming data into AWS. It can capture, transform, and load streaming data into Amazon Kinesis Analytics, Amazon S3, Amazon Redshift, and Amazon Elasticsearch Service, enabling near real-time analytics with existing business intelligence tools and dashboards you're already using today. It is a fully managed service that automatically scales to match the throughput of your data and requires no ongoing administration. It can also batch, compress, and encrypt the data before loading it, minimizing the amount of storage used at the destination and increasing security.

For more information on Kinesis firehose, please visit the below URL:

• https://aws.amazon.com/kinesis/firehose/

Amazon Redshift is a fully managed, petabyte-scale data warehouse service in the cloud. You can start with just a few hundred gigabytes of data and scale to a petabyte or more. This enables you to use your data to acquire new insights for your business and customers. For more information on Redshift, please visit the below URL:

http://docs.aws.amazon.com/redshift/latest/mgmt/welcome.html

NEW QUESTION 13

You have deployed an Elastic Beanstalk application in a new environment and want to save the current state of your environment in a document. You want to be able to restore your environment to the current state later or possibly create a new environment. You also want to make sure you have a restore point. How can you achieve this?

- A. Use CloudFormation templates

- B. Configuration Management Templates

- C. Saved Configurations

- D. Saved Templates

Answer: C

Explanation:

You can save your environment's configuration as an object in Amazon S3 that can be applied to other environments during environment creation, or applied to a running environment. Saved configurations are YAML formatted templates that define an environment's platform configuration, tier, configuration option settings, and tags.

For more information on Saved Configurations, please refer to the link below:

• http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/environment-configuration-savedconfig.html

NEW QUESTION 14

You are using Elastic Beanstalk to deploy an application that consists of a web and application server. There is a requirement to run some Python scripts before the application version is deployed to the web server. Which of the following can be used to achieve this?

- A. Make use of container commands

- B. Make use of Docker containers

- C. Make use of custom resources

- D. Make use of multiple Elastic Beanstalk environments

Answer: A

Explanation:

The AWS Documentation mentions the following

You can use the container_commands key to execute commands that affect your application source code. Container commands run after the application and web server have been set up and the application version archive has been extracted, but before the application version is deployed. Non-container commands and other customization operations are performed prior to the application source code being extracted.

For more information on container commands, please visit the URL below: http://docs.aws.amazon.com/elasticbeanstalk/latest/dg/customize-containers-ec2.html

NEW QUESTION 15

You have an I/O and network-intensive application running on multiple Amazon EC2 instances that cannot handle a large ongoing increase in traffic. The Amazon EC2 instances are using two Amazon EBS PIOPS volumes each, and each instance is identical.

Which of the following approaches should be taken in order to reduce load on the instances with the least disruption to the application?

- A. Create an AMI from each instance, and set up Auto Scaling groups with a larger instance type that has enhanced networking enabled and is Amazon EBS-optimized.

- B. Stop each instance and change each instance to a larger Amazon EC2 instance type that has enhanced networking enabled and is Amazon EBS-optimized. Ensure that RAID striping is also set up on each instance.

- C. Add an instance-store volume for each running Amazon EC2 instance and implement RAID striping to improve I/O performance.

- D. Add an Amazon EBS volume for each running Amazon EC2 instance and implement RAID striping to improve I/O performance.

- E. Create an AMI from an instance, and set up an Auto Scaling group with an instance type that has enhanced networking enabled and is Amazon EBS-optimized.

Answer: E

Explanation:

The AWS documentation mentions the following on AMIs:

An Amazon Machine Image (AMI) provides the information required to launch an instance, which is a virtual server in the cloud. You specify an AMI when you launch an instance, and you can launch as many instances from the AMI as you need. You can also launch instances from as many different AMIs as you need.

For more information on AMIs, please visit the link:

• http://docs.aws.amazon.com/AWSEC2/latest/UserGuide/AMIs.html

NEW QUESTION 16

You are in charge of designing a Cloudformation template which deploys a LAMP stack. After deploying a stack, you see that the status of the stack is showing as CREATE_COMPLETE, but the apache server is still not up and running and is experiencing issues while starting up. You want to ensure that the stack creation only shows the status of CREATE_COMPLETE after all resources defined in the stack are up and running. How can you achieve this?

Choose 2 answers from the options given below.

- A. Define a stack policy which defines that all underlying resources should be up and running before showing a status of CREATE_COMPLETE.

- B. Use lifecycle hooks to mark the completion of the creation and configuration of the underlying resource.

- C. Use the CreationPolicy to ensure it is associated with the EC2 Instance resource.

- D. Use the CFN helper scripts to signal once the resource configuration is complete.

Answer: CD

Explanation:

The AWS Documentation mentions

When you provision an Amazon EC2 instance in an AWS CloudFormation stack, you might specify additional actions to configure the instance, such as install software packages or bootstrap applications. Normally, CloudFormation proceeds with stack creation after the instance has been successfully created. However, you can use a CreationPolicy so that CloudFormation proceeds with stack creation only after your configuration actions are done. That way you'll know your applications are ready to go after stack creation succeeds.

For more information on the CreationPolicy, please visit the URL below: https://aws.amazon.com/blogs/devops/use-a-creationpolicy-to-wait-for-on-instance-configurations/
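
To show how the two correct answers fit together, the following Python sketch builds a JSON template fragment that pairs a `CreationPolicy` with a `cfn-signal` call in the instance's user data. The logical ID `WebServer`, the AMI ID, the instance type, the 15-minute timeout, and the bootstrap commands are all placeholder assumptions, not values from the question.

```python
import json

# Bootstrap script: install Apache, then report its exit status to
# CloudFormation. ${AWS::StackName} and ${AWS::Region} are filled in by Fn::Sub.
user_data = "\n".join([
    "#!/bin/bash",
    "yum install -y httpd && service httpd start",
    "/opt/aws/bin/cfn-signal -e $? --stack ${AWS::StackName} "
    "--resource WebServer --region ${AWS::Region}",
])

template = {
    "Resources": {
        "WebServer": {  # hypothetical logical ID
            "Type": "AWS::EC2::Instance",
            # Wait for one success signal, for at most 15 minutes.
            "CreationPolicy": {
                "ResourceSignal": {"Count": 1, "Timeout": "PT15M"}
            },
            "Properties": {
                "ImageId": "ami-12345678",   # placeholder AMI ID
                "InstanceType": "t2.micro",  # placeholder instance type
                "UserData": {"Fn::Base64": {"Fn::Sub": user_data}},
            },
        }
    }
}

template_json = json.dumps(template, indent=2)
```

With this arrangement the instance only counts as created once `cfn-signal` reports success, so a failed Apache start should surface as a failed stack creation rather than CREATE_COMPLETE.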

NEW QUESTION 17

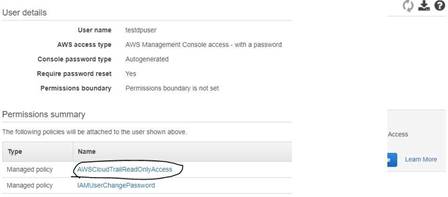

An audit is going to be conducted for your company's AWS account. Which of the following steps will ensure that the auditor has the right access to the logs of your AWS account?

- A. Enable S3 and ELB logs. Send the logs as a zip file to the IT Auditor.

- B. Ensure CloudTrail is enabled. Create a user account for the Auditor and attach the AWSCloudTrailReadOnlyAccess Policy to the user.

- C. Ensure that CloudTrail is enabled. Create a user for the IT Auditor and ensure that full control is given to the user for CloudTrail.

- D. Enable CloudWatch logs. Create a user for the IT Auditor and ensure that full control is given to the user for the CloudWatch logs.

Answer: B

Explanation:

The AWS Documentation clearly mentions the below

AWS CloudTrail is an AWS service that helps you enable governance, compliance, and operational and risk auditing of your AWS account. Actions taken by a user, role, or an AWS service are recorded as events in CloudTrail. Events include actions taken in the AWS Management Console, AWS Command Line Interface, and AWS SDKs and APIs.

For more information on Cloudtrail, please visit the below URL:

• http://docs.aws.amazon.com/awscloudtrail/latest/userguide/cloudtrail-user-guide.html

NEW QUESTION 18

You work for a startup that has developed a new photo-sharing application for mobile devices. Over recent months your application has increased in popularity; this has resulted in a decrease in the performance of the application due to the increased load. Your application has a two-tier architecture that is composed of an Auto Scaling PHP application tier and a MySQL RDS instance initially deployed with AWS CloudFormation. Your Auto Scaling group has a min value of 4 and a max value of 8. The desired capacity is now at 8 because of the high CPU utilization of the instances. After some analysis, you are confident that the performance issues stem from a constraint in CPU capacity, although memory utilization remains low. You therefore decide to move from the general-purpose M3 instances to the compute-optimized C3 instances. How would you deploy this change while minimizing any interruption to your end users?

- A. Sign into the AWS Management Console, copy the old launch configuration, and create a new launch configuration that specifies the C3 instances. Update the Auto Scaling group with the new launch configuration. Auto Scaling will then update the instance type of all running instances.

- B. Sign into the AWS Management Console, and update the existing launch configuration with the new C3 instance type. Add an UpdatePolicy attribute to your Auto Scaling group that specifies AutoScalingRollingUpdate.

- C. Update the launch configuration specified in the AWS CloudFormation template with the new C3 instance type. Run a stack update with the new template. Auto Scaling will then update the instances with the new instance type.

- D. Update the launch configuration specified in the AWS CloudFormation template with the new C3 instance type. Also add an UpdatePolicy attribute to your Auto Scaling group that specifies AutoScalingRollingUpdate. Run a stack update with the new template.

Answer: D

Explanation:

The AWS::AutoScaling::AutoScalingGroup resource supports an UpdatePolicy attribute. This is used to define how an Auto Scaling group resource is updated when an update to the CloudFormation stack occurs. A common approach to updating an Auto Scaling group is to perform a rolling update, which is done by specifying the AutoScalingRollingUpdate policy. This retains the same Auto Scaling group and replaces old instances with new ones, according to the parameters specified. For more information on rolling updates, please visit the link below:

• https://aws.amazon.com/premiumsupport/knowledge-center/auto-scaling-group-rolling-updates/
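
A rolling update replaces instances in batches rather than all at once. The Python sketch below shows an `UpdatePolicy` fragment and a toy helper that splits a group into replacement batches. The values for `MinInstancesInService`, `MaxBatchSize`, and `PauseTime` are illustrative assumptions, and `rolling_batches` is a hypothetical helper, not how CloudFormation itself schedules the work.

```python
# UpdatePolicy attribute for an AWS::AutoScaling::AutoScalingGroup resource.
# The three values are assumptions chosen for illustration.
UPDATE_POLICY = {
    "AutoScalingRollingUpdate": {
        "MinInstancesInService": 6,
        "MaxBatchSize": 2,
        "PauseTime": "PT5M",
    }
}


def rolling_batches(instance_ids, max_batch_size):
    """Split the group's instances into consecutive replacement batches."""
    return [
        instance_ids[i:i + max_batch_size]
        for i in range(0, len(instance_ids), max_batch_size)
    ]
```

For the 8 instances in the question and a batch size of 2, the helper yields 4 batches, so at most 2 instances are out of service at any point during the update.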

NEW QUESTION 19

You need to store a large volume of data. The data needs to be readily accessible for a short period, but then needs to be archived indefinitely after that. What is a cost-effective solution?

- A. Store all the data in S3 so that it can be more cost effective

- B. Store your data in Amazon S3, and use lifecycle policies to archive to Amazon Glacier

- C. Store your data in an EBS volume, and use lifecycle policies to archive to Amazon Glacier.

- D. Store your data in Amazon S3, and use lifecycle policies to archive to S3-Infrequent Access

Answer: B

Explanation:

The AWS documentation mentions the following on Lifecycle policies

Lifecycle configuration enables you to specify the lifecycle management of objects in a bucket. The configuration is a set of one or more rules, where each rule defines an action for Amazon S3 to apply to a group of objects. These actions can be classified as follows:

Transition actions - In which you define when objects transition to another storage class. For example, you may choose to transition objects to the STANDARD_IA (IA, for infrequent access) storage class 30 days after creation, or archive objects to the GLACIER storage class one year after creation.

Expiration actions - In which you specify when the objects expire. Then Amazon S3 deletes the expired objects on your behalf.

For more information on S3 Lifecycle policies, please visit the URL below:

• http://docs.aws.amazon.com/AmazonS3/latest/dev/object-lifecycle-mgmt.html
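
A transition rule for the scenario in the question might look like the following. This is a minimal Python sketch: the rule ID and the 30-day threshold are assumptions, the dictionary mirrors the `LifecycleConfiguration` structure accepted by boto3's `put_bucket_lifecycle_configuration`, and `storage_class_at` is a hypothetical local helper that makes no AWS call.

```python
# Hypothetical rule: archive every object to Glacier 30 days after creation.
LIFECYCLE_CONFIGURATION = {
    "Rules": [
        {
            "ID": "archive-to-glacier",
            "Filter": {"Prefix": ""},  # empty prefix: applies to all objects
            "Status": "Enabled",
            "Transitions": [{"Days": 30, "StorageClass": "GLACIER"}],
        }
    ]
}


def storage_class_at(age_days: int, config: dict = LIFECYCLE_CONFIGURATION) -> str:
    """Return the storage class an object of the given age would be in."""
    current = "STANDARD"
    for rule in config["Rules"]:
        if rule["Status"] != "Enabled":
            continue
        # Apply transitions in ascending order of their day thresholds.
        for transition in sorted(rule["Transitions"], key=lambda t: t["Days"]):
            if age_days >= transition["Days"]:
                current = transition["StorageClass"]
    return current
```

Objects stay in the standard class while they need to be readily accessible and move to Glacier afterwards, which is the pattern answer B describes.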

NEW QUESTION 20

An enterprise wants to use a third-party SaaS application running on AWS. The SaaS application needs to have access to issue several API commands to discover Amazon EC2 resources running within the enterprise's account. The enterprise has internal security policies that require any outside access to their environment to conform to the principles of least privilege, and there must be controls in place to ensure that the credentials used by the SaaS vendor cannot be used by any other third party. Which of the following would meet all of these conditions?

- A. From the AWS Management Console, navigate to the Security Credentials page and retrieve the access and secret key for your account.

- B. Create an IAM user within the enterprise account, and assign a user policy to the IAM user that allows only the actions required by the SaaS application. Create a new access and secret key for the user and provide these credentials to the SaaS provider.

- C. Create an IAM role for cross-account access that allows the SaaS provider's account to assume the role, and assign it a policy that allows only the actions required by the SaaS application.

- D. Create an IAM role for EC2 instances, assign it a policy that allows only the actions required for the SaaS application to work, and provide the role ARN to the SaaS provider to use when launching their application instances.

Answer: C

Explanation:

Many SaaS platforms can access AWS resources via a cross-account role created in your AWS account. If you go to Roles in the IAM console, you will see the option to add a cross-account role.

For more information on cross-account roles, please visit the URL below:

• http://docs.aws.amazon.com/IAM/latest/UserGuide/tutorial_cross-account-with-roles.html
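
A cross-account role consists of a trust policy (who may assume it) and a permissions policy (what it may do). The Python sketch below builds both as plain dictionaries. Restricting the role with an `sts:ExternalId` condition is an assumption added here as one common way to keep other third parties from using the role; the explanation above does not mention it. The account ID and external ID in the usage note are placeholders.

```python
import json


def trust_policy(saas_account_id: str, external_id: str) -> dict:
    """Trust policy letting one external account assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": f"arn:aws:iam::{saas_account_id}:root"},
                "Action": "sts:AssumeRole",
                # Assumption: require a shared external ID on every AssumeRole call.
                "Condition": {"StringEquals": {"sts:ExternalId": external_id}},
            }
        ],
    }


# Least privilege: read-only discovery of EC2 resources, nothing else.
PERMISSIONS_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "ec2:Describe*", "Resource": "*"}
    ],
}
```

For example, `json.dumps(trust_policy("111122223333", "example-external-id"))` produces a document that could be supplied as the role's trust policy, with the permissions policy attached separately.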
