Highest Quality SAP-C01 Torrent 2021

Passing the Amazon-Web-Services SAP-C01 exam on a short timeline is difficult without help. Testking offers up-to-date, verified Amazon-Web-Services SAP-C01 practice questions, along with practice guides for the AWS Certified Solutions Architect - Professional exam.

Free SAP-C01 Demo Online For Amazon-Web-Services Certification:

NEW QUESTION 1

A company is implementing a multi-account strategy; however, the Management team has expressed concerns that services like DNS may become overly complex. The company needs a solution that allows private DNS to be shared among virtual private clouds (VPCs) in different accounts. The company will have approximately 50 accounts in total.

What solution would create the LEAST complex DNS architecture and ensure that each VPC can resolve all AWS resources?

- A. Create a shared services VPC in a central account, and create a VPC peering connection from the shared services VPC to each of the VPCs in the other accounts. Within Amazon Route 53, create a privately hosted zone in the shared services VPC and resource record sets for the domain and subdomains. Programmatically associate other VPCs with the hosted zone.

- B. Create a VPC peering connection among the VPCs in all accounts. Set the VPC attributes enableDnsHostnames and enableDnsSupport to “true” for each VPC. Create an Amazon Route 53 private zone for each VPC. Create resource record sets for the domain and subdomains. Programmatically associate the hosted zones in each VPC with the other VPCs.

- C. Create a shared services VPC in a central account. Create a VPC peering connection from the VPCs in other accounts to the shared services VPC. Create an Amazon Route 53 privately hosted zone in the shared services VPC with resource record sets for the domain and subdomains. Allow UDP and TCP port 53 over the VPC peering connections.

- D. Set the VPC attributes enableDnsHostnames and enableDnsSupport to “false” in every VPC. Create an AWS Direct Connect connection with a private virtual interface. Allow UDP and TCP port 53 over the virtual interface. Use the on-premises DNS servers to resolve the IP addresses in each VPC on AWS.

Answer: A

Explanation:

https://aws.amazon.com/blogs/networking-and-content-delivery/centralized-dns-management-of-hybrid-cloud-w
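Associating a private hosted zone with a VPC that lives in another account is a two-step handshake: the account that owns the zone authorizes the VPC, and the account that owns the VPC then performs the association. The sketch below is one way to script that for many accounts. It assumes boto3-style Route 53 clients are passed in (one per account); the helper name `share_private_zone` and any IDs you pass to it are hypothetical and not taken from the question.

```python
def share_private_zone(zone_owner_r53, vpc_owner_r53, hosted_zone_id, vpc_id, vpc_region):
    """Associate a VPC in another account with a private hosted zone.

    Both client arguments are assumed to be boto3 Route 53 clients, for
    example boto3.Session(profile_name=...).client("route53").
    """
    vpc = {"VPCRegion": vpc_region, "VPCId": vpc_id}

    # Step 1: the account that owns the hosted zone authorizes the association.
    zone_owner_r53.create_vpc_association_authorization(
        HostedZoneId=hosted_zone_id, VPC=vpc
    )
    # Step 2: the account that owns the VPC performs the association.
    vpc_owner_r53.associate_vpc_with_hosted_zone(
        HostedZoneId=hosted_zone_id, VPC=vpc
    )
    # Step 3: the authorization is no longer needed once the VPC is associated.
    zone_owner_r53.delete_vpc_association_authorization(
        HostedZoneId=hosted_zone_id, VPC=vpc
    )
    return vpc
```

With roughly 50 accounts, the same function could be called in a loop over each member account's VPC, which is what keeps the single shared zone simpler than one zone per VPC.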

NEW QUESTION 2

A company has developed a new billing application that will be released in two weeks. Developers are testing the application running on 10 EC2 instances managed by an Auto Scaling group in subnet 172.31.0.0/24 within VPC A with CIDR block 172.31.0.0/16. The Developers noticed connection timeout errors in the application logs while connecting to an Oracle database running on an Amazon EC2 instance in the same region within VPC B with CIDR block 172.50.0.0/16. The IP of the database instance is hard-coded in the application instances.

Which recommendations should a Solutions Architect present to the Developers to solve the problem in a secure way with minimal maintenance and overhead?

- A. Disable the SrcDestCheck attribute for all instances running the application and Oracle Database. Change the default route of VPC A to point to the ENI of the Oracle Database that has an IP address assigned within the range of 172.50.0.0/26.

- B. Create and attach internet gateways for both VPCs. Configure default routes to the internet gateways for both VPCs. Assign an Elastic IP for each Amazon EC2 instance in VPC A.

- C. Create a VPC peering connection between the two VPCs and add a route to the routing table of VPC A that points to the IP address range of 172.50.0.0/16.

- D. Create an additional Amazon EC2 instance for each VPC as a customer gateway; create one virtual private gateway (VGW) for each VPC, configure an end-to-end VPN, and advertise the routes for 172.50.0.0/16.

Answer: C
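VPC peering only works when the two CIDR blocks do not overlap, and each VPC needs a route for the other's range before traffic can flow in both directions. The following sketch checks the ranges from the question with Python's standard `ipaddress` module. The helper `peering_route` and the connection ID `pcx-example` are placeholders for illustration, not values from the question.

```python
import ipaddress

vpc_a = ipaddress.ip_network("172.31.0.0/16")
vpc_b = ipaddress.ip_network("172.50.0.0/16")
app_subnet = ipaddress.ip_network("172.31.0.0/24")


def peering_route(local_cidr, remote_cidr, peering_id):
    """Return the route a VPC needs to reach its peer.

    Raises ValueError when the CIDR blocks overlap, because overlapping
    VPCs cannot be peered.
    """
    if local_cidr.overlaps(remote_cidr):
        raise ValueError("overlapping CIDR blocks cannot be peered")
    return {str(remote_cidr): peering_id}


# Route for VPC A (the one named in the answer) and the matching return route.
route_in_a = peering_route(vpc_a, vpc_b, "pcx-example")
route_in_b = peering_route(vpc_b, vpc_a, "pcx-example")

# The application subnet sits inside VPC A, so the VPC-level route covers it.
subnet_is_covered = app_subnet.subnet_of(vpc_a)
```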

NEW QUESTION 3

A bank is designing an online customer service portal where customers can chat with customer service agents. The portal is required to maintain a 15-minute RPO or RTO in case of a regional disaster. Banking regulations require that all customer service chat transcripts must be preserved on durable storage for at least 7 years, chat conversations must be encrypted in-flight, and transcripts must be encrypted at rest. The Data Loss Prevention team requires that data at rest must be encrypted using a key that the team controls, rotates, and revokes.

Which design meets these requirements?

- A. The chat application logs each chat message into Amazon CloudWatch Logs. A scheduled AWS Lambda function invokes a CloudWatch Logs CreateExportTask every 5 minutes to export chat transcripts to Amazon S3. The S3 bucket is configured for cross-region replication to the backup region. Separate AWS KMS keys are specified for the CloudWatch Logs group and the S3 bucket.

- B. The chat application logs each chat message into two different Amazon CloudWatch Logs groups in two different regions, with the same AWS KMS key applied. Both CloudWatch Logs groups are configured to export logs into an Amazon Glacier vault with a 7-year vault lock policy with a KMS key specified.

- C. The chat application logs each chat message into Amazon CloudWatch Logs. A subscription filter on the CloudWatch Logs group feeds into an Amazon Kinesis Data Firehose which streams the chat messages into an Amazon S3 bucket in the backup region. Separate AWS KMS keys are specified for the CloudWatch Logs group and the Kinesis Data Firehose.

- D. The chat application logs each chat message into Amazon CloudWatch Logs. The CloudWatch Logs group is configured to export logs into an Amazon Glacier vault with a 7-year vault lock policy. Glacier cross-region replication mirrors chat archives to the backup region. Separate AWS KMS keys are specified for the CloudWatch Logs group and the Amazon Glacier vault.

Answer: B

NEW QUESTION 4

A company has a large on-premises Apache Hadoop cluster with a 20 PB HDFS database. The cluster is growing every quarter by roughly 200 instances and 1 PB. The company’s goals are to enable resiliency for its Hadoop data, limit the impact of losing cluster nodes, and significantly reduce costs. The current cluster runs 24/7 and supports a variety of analysis workloads, including interactive queries and batch processing.

Which solution would meet these requirements with the LEAST expense and downtime?

- A. Use AWS Snowmobile to migrate the existing cluster data to Amazon S3. Create a persistent Amazon EMR cluster initially sized to handle the interactive workload based on historical data from the on-premises cluster. Store the data on EMRFS. Minimize costs using Reserved Instances for master and core nodes and Spot Instances for task nodes, and auto scale task nodes based on Amazon CloudWatch metrics. Create job-specific, optimized clusters for batch workloads that are similarly optimized.

- B. Use AWS Snowmobile to migrate the existing cluster data to Amazon S3. Create a persistent Amazon EMR cluster of similar size and configuration to the current cluster. Store the data on EMRFS. Minimize costs by using Reserved Instances. As the workload grows each quarter, purchase additional Reserved Instances and add to the cluster.

- C. Use AWS Snowball to migrate the existing cluster data to Amazon S3. Create a persistent Amazon EMR cluster initially sized to handle the interactive workloads based on historical data from the on-premises cluster. Store the data on EMRFS. Minimize costs using Reserved Instances for master and core nodes and Spot Instances for task nodes, and auto scale task nodes based on Amazon CloudWatch metrics. Create job-specific, optimized clusters for batch workloads that are similarly optimized.

- D. Use AWS Direct Connect to migrate the existing cluster data to Amazon S3. Create a persistent Amazon EMR cluster initially sized to handle the interactive workload based on historical data from the on-premises cluster. Store the data on EMRFS. Minimize costs using Reserved Instances for master and core nodes and Spot Instances for task nodes, and auto scale task nodes based on Amazon CloudWatch metrics. Create job-specific, optimized clusters for batch workloads that are similarly optimized.

Answer: A

Explanation:

Q: How should I choose between Snowmobile and Snowball?

To migrate large datasets of 10PB or more in a single location, you should use Snowmobile. For datasets less than 10PB or distributed in multiple locations, you should use Snowball. In addition, you should evaluate the amount of available bandwidth in your network backbone. If you have a high speed backbone with hundreds of Gb/s of spare throughput, then you can use Snowmobile to migrate the large datasets all at once. If you have limited bandwidth on your backbone, you should consider using multiple Snowballs to migrate the data incrementally.
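A rough calculation shows why a network transfer is unattractive at this scale. The sketch below estimates how long a dataset takes to move over a dedicated link and encodes the 10 PB rule of thumb quoted above. The 10 Gb/s link speed in the usage note and the use of decimal petabytes are assumptions for illustration; the question does not state the available bandwidth.

```python
SECONDS_PER_DAY = 86_400


def transfer_days(petabytes, link_gbps, utilization=1.0):
    """Days needed to move `petabytes` (decimal PB) over a `link_gbps` link.

    `utilization` is the fraction of the link actually available (0 to 1).
    """
    bits = petabytes * 1e15 * 8
    seconds = bits / (link_gbps * 1e9 * utilization)
    return seconds / SECONDS_PER_DAY


def suggested_device(petabytes, single_location=True):
    """Apply the quoted rule: 10 PB or more in one location -> Snowmobile."""
    if petabytes >= 10 and single_location:
        return "Snowmobile"
    return "Snowball"
```

Under these assumptions, `transfer_days(20, 10)` comes to roughly 185 days for the 20 PB cluster on a fully utilized 10 Gb/s link, while the cluster keeps growing by about 1 PB per quarter. `suggested_device(20)` returns `"Snowmobile"`.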

NEW QUESTION 5

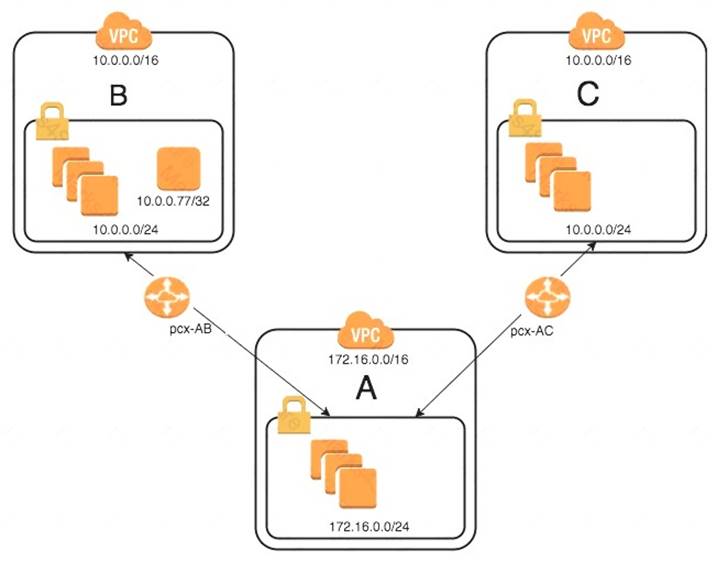

An organization has recently grown through acquisitions. Two of the purchased companies use the same IP CIDR range. There is a new short-term requirement to allow AnyCompany A (VPC-A) to communicate with a server that has the IP address 10.0.0.77 in AnyCompany B (VPC-B). AnyCompany A must also communicate with all resources in AnyCompany C (VPC-C). The Network team has created the VPC peer links, but it is having issues with communications between VPC-A and VPC-B. After an investigation, the team believes that the routing tables in the VPCs are incorrect.

What configuration will allow AnyCompany A to communicate with AnyCompany C in addition to the database in AnyCompany B?

- A. On VPC-A, create a static route for the VPC-B CIDR range (10.0.0.0/24) across VPC peer pcx-AB. Create a static route of 10.0.0.0/16 across VPC peer pcx-AC. On VPC-B, create a static route for VPC-A CIDR (172.16.0.0/24) on peer pcx-AB. On VPC-C, create a static route for VPC-A CIDR (172.16.0.0/24) across peer pcx-AC.

- B. On VPC-A, enable dynamic route propagation on pcx-AB and pcx-AC. On VPC-B, enable dynamic route propagation and use security groups to allow only the IP address 10.0.0.77/32 on VPC peer pcx-AB. On VPC-C, enable dynamic route propagation with VPC-A on peer pcx-AC.

- C. On VPC-A, create network access control lists that block the IP address 10.0.0.77/32 on VPC peer pcx-AC. On VPC-A, create a static route for VPC-B CIDR (10.0.0.0/24) on pcx-AB and a static route for VPC-C CIDR (10.0.0.0/24) on pcx-AC. On VPC-B, create a static route for VPC-A CIDR (172.16.0.0/24) across peer pcx-AB. On VPC-C, create a static route for VPC-A CIDR (172.16.0.0/24) across peer pcx-AC.

- D. On VPC-A, create a static route for the VPC-B CIDR (10.0.0.77/32) database across VPC peer pcx-AB. Create a static route for the VPC-C CIDR on VPC peer pcx-AC. On VPC-B, create a static route for VPC-A CIDR (172.16.0.0/24) on peer pcx-AB. On VPC-C, create a static route for VPC-A CIDR (172.16.0.0/24) across peer pcx-AC.

Answer: D
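Option D relies on longest prefix match: when several routes contain a destination, the most specific one is used, so a /32 route for the database takes precedence over the broader route that covers the same address. A minimal sketch of that selection logic, using the standard `ipaddress` module. The VPC-C CIDR of 10.0.0.0/24 is taken from the wording of option C and is an assumption here, since the diagram is not reproduced in the text.

```python
import ipaddress

# VPC-A route table as described in option D (VPC-C CIDR assumed).
ROUTES_VPC_A = [
    (ipaddress.ip_network("172.16.0.0/24"), "local"),
    (ipaddress.ip_network("10.0.0.77/32"), "pcx-AB"),
    (ipaddress.ip_network("10.0.0.0/24"), "pcx-AC"),
]


def select_route(routes, destination):
    """Return the target of the most specific route containing `destination`."""
    ip = ipaddress.ip_address(destination)
    candidates = [(net, target) for net, target in routes if ip in net]
    if not candidates:
        return None
    # Longest prefix wins: /32 beats /24 for the same address.
    return max(candidates, key=lambda item: item[0].prefixlen)[1]
```

With this table, `select_route(ROUTES_VPC_A, "10.0.0.77")` returns `"pcx-AB"`, while every other address in 10.0.0.0/24 goes to `"pcx-AC"`.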

NEW QUESTION 6

A company uses an Amazon EMR cluster to process data once a day. The raw data comes from Amazon S3, and the resulting processed data is also stored in Amazon S3. The processing must complete within 4 hours; currently, it only takes 3 hours. However, the processing time is taking 5 to 10 minutes longer each week due to an increasing volume of raw data.

The team is also concerned about rising costs as the compute capacity increases. The EMR cluster is currently running on three m3.xlarge instances (one master and two core nodes).

Which of the following solutions will reduce costs related to the increasing compute needs?

- A. Add additional task nodes, but have the team purchase an all-upfront convertible Reserved Instance for each additional node to offset the costs.

- B. Add additional task nodes, but use instance fleets with the master node in On-Demand mode and a mix of On-Demand and Spot Instances for the core and task nodes. Purchase a scheduled Reserved Instance for the master node.

- C. Add additional task nodes, but use instance fleets with the master node in Spot mode and a mix of On-Demand and Spot Instances for the core and task nodes. Purchase enough scheduled Reserved Instances to offset the cost of running any On-Demand instances.

- D. Add additional task nodes, but use instance fleets with the master node in On-Demand mode and a mix of On-Demand and Spot Instances for the core and task nodes. Purchase a standard all-upfront Reserved Instance for the master node.

Answer: B
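The figures in the question imply a fairly short runway before the 4-hour limit is hit, which is why extra task nodes are needed at all. A small sketch of that arithmetic, assuming the weekly increase stays constant (the question only gives a 5 to 10 minute range):

```python
def weeks_within_limit(current_minutes, limit_minutes, growth_per_week):
    """Number of whole weeks the job still finishes within the limit,
    assuming the run time grows by a fixed number of minutes each week."""
    headroom = limit_minutes - current_minutes
    return headroom // growth_per_week


worst_case = weeks_within_limit(3 * 60, 4 * 60, 10)  # 6 weeks
best_case = weeks_within_limit(3 * 60, 4 * 60, 5)    # 12 weeks
```

So the current cluster has somewhere between 6 and 12 weeks of headroom under this linear-growth assumption.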

NEW QUESTION 7

A company has an application behind a load balancer with enough Amazon EC2 instances to satisfy peak demand. Scripts and third-party deployment solutions are used to configure EC2 instances when demand increases or an instance fails. The team must periodically evaluate the utilization of the instance types to ensure that the correct sizes are deployed.

How can this workload be optimized to meet these requirements?

- A. Use CloudFormer to create AWS CloudFormation stacks from the current resources. Deploy that stack by using AWS CloudFormation in the same region. Use Amazon CloudWatch alarms to send notifications about underutilized resources to provide cost-savings suggestions.

- B. Create an Auto Scaling group to scale the instances, and use AWS CodeDeploy to perform the configuration. Change from a load balancer to an Application Load Balancer. Purchase a third-party product that provides suggestions for cost savings on AWS resources.

- C. Deploy the application by using AWS Elastic Beanstalk with default options. Register for an AWS Support Developer plan. Review the instance usage for the application by using Amazon CloudWatch, and identify less expensive instances that can handle the load. Hold monthly meetings to review new instance types and determine whether Reserved Instances should be purchased.

- D. Deploy the application as a Docker image by using Amazon ECS. Set up Amazon EC2 Auto Scaling and Amazon ECS scaling. Register for AWS Business Support and use Trusted Advisor checks to provide suggestions on cost savings.

Answer: D

NEW QUESTION 8

A company has a requirement that only allows specially hardened AMIs to be launched into public subnets in a VPC, and for the AMIs to be associated with a specific security group. Allowing non-compliant instances to launch into the public subnet could present a significant security risk if they are allowed to operate.

A mapping of approved AMIs to subnets to security groups exists in an Amazon DynamoDB table in the same AWS account. The company created an AWS Lambda function that, when invoked, will terminate a given Amazon EC2 instance if the combination of AMI, subnet, and security group is not approved in the DynamoDB table.

What should the Solutions Architect do to MOST quickly mitigate the risk of compliance deviations?

- A. Create an Amazon CloudWatch Events rule that matches each time an EC2 instance is launched using one of the allowed AMIs, and associate it with the Lambda function as the target.

- B. For the Amazon S3 bucket receiving the AWS CloudTrail logs, create an S3 event notification configuration with a filter to match when logs contain the ec2:RunInstances action, and associate it with the Lambda function as the target.

- C. Enable AWS CloudTrail and configure it to stream to an Amazon CloudWatch Logs group. Create a metric filter in CloudWatch to match when the ec2:RunInstances action occurs, and trigger the Lambda function when the metric is greater than 0.

- D. Create an Amazon CloudWatch Events rule that matches each time an EC2 instance is launched, and associate it with the Lambda function as the target.

Answer: C

Explanation:

https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/ec2-instance-lifecycle.html

NEW QUESTION 9

While debugging a backend application for an IoT system that supports globally distributed devices, a Solutions Architect notices that stale data is occasionally being sent to user devices. Devices often share data, and stale data does not cause issues in most cases. However, device operations are disrupted when a device reads the stale data after an update.

The global system has multiple identical application stacks deployed in different AWS Regions. If a user device travels out of its home geographic region, it will always connect to the geographically closest AWS Region to write or read data. The same data is available in all supported AWS Regions using an Amazon DynamoDB global table.

What change should be made to avoid causing disruptions in device operations?

- A. Update the backend to use strongly consistent reads. Update the devices to always write to and read from their home AWS Region.

- B. Enable strong consistency globally on a DynamoDB global table. Update the backend to use strongly consistent reads.

- C. Switch the backend data store to Amazon Aurora MySQL with cross-region replicas. Update the backend to always write to the master endpoint.

- D. Select one AWS Region as a master and perform all writes in that AWS Region only. Update the backend to use strongly consistent reads.

Answer: B

NEW QUESTION 10

A company is using an Amazon CloudFront distribution to distribute both static and dynamic content from a web application running behind an Application Load Balancer. The web application requires user authorization and session tracking for dynamic content. The CloudFront distribution has a single cache behavior configured to forward the Authorization, Host, and User-Agent HTTP whitelist headers and a session cookie to the origin. All other cache behavior settings are set to their default value.

A valid ACM certificate is applied to the CloudFront distribution with a matching CNAME in the distribution settings. The ACM certificate is also applied to the HTTPS listener for the Application Load Balancer. The CloudFront origin protocol policy is set to HTTPS only. Analysis of the cache statistics report shows that the miss rate for this distribution is very high.

What can the Solutions Architect do to improve the cache hit rate for this distribution without causing the SSL/TLS handshake between CloudFront and the Application Load Balancer to fail?

- A. Create two cache behaviors for static and dynamic content. Remove the User-Agent and Host HTTP headers from the whitelist headers section on both of the cache behaviors. Remove the session cookie from the whitelist cookies section and the Authorization HTTP header from the whitelist headers section for cache behavior configured for static content.

- B. Remove the User-Agent and Authorization HTTP headers from the whitelist headers section of the cache behavior. Then update the cache behavior to use presigned cookies for authorization.

- C. Remove the Host HTTP header from the whitelist headers section and remove the session cookie from the whitelist cookies section for the default cache behavior. Enable automatic object compression and use Lambda@Edge viewer request events for user authorization.

- D. Create two cache behaviors for static and dynamic content. Remove the User-Agent HTTP header from the whitelist headers section on both of the cache behaviors. Remove the session cookie from the whitelist cookies section and the Authorization HTTP header from the whitelist headers section for cache behavior configured for static content.

Answer: D

NEW QUESTION 11

A company is migrating its marketing website and content management system from an on-premises data center to AWS. The company wants the AWS application to be developed in a VPC with Amazon EC2 instances used for the web servers and an Amazon RDS instance for the database.

The company has a runbook document that describes the installation process of the on-premises system. The company would like to base the AWS system on the processes referenced in the runbook document. The runbook document describes the installation and configuration of the operating systems, network settings, the website, and content management system software on the servers. After the migration is complete, the company wants to be able to make changes quickly to take advantage of other AWS features.

How can the application and environment be deployed and automated in AWS, while allowing for future changes?

- A. Update the runbook to describe how to create the VPC, the EC2 instances, and the RDS instance for the application by using the AWS Console. Make sure that the rest of the steps in the runbook are updated to reflect any changes that may come from the AWS migration.

- B. Write a Python script that uses the AWS API to create the VPC, the EC2 instances, and the RDS instance for the application. Write shell scripts that implement the rest of the steps in the runbook. Have the Python script copy and run the shell scripts on the newly created instances to complete the installation.

- C. Write an AWS CloudFormation template that creates the VPC, the EC2 instances, and the RDS instance for the application. Ensure that the rest of the steps in the runbook are updated to reflect any changes that may come from the AWS migration.

- D. Write an AWS CloudFormation template that creates the VPC, the EC2 instances, and the RDS instance for the application. Include EC2 user data in the AWS CloudFormation template to install and configure the software.

Answer: D

NEW QUESTION 12

An internal security audit of AWS resources within a company found that a number of Amazon EC2 instances running Microsoft Windows workloads were missing several important operating system-level patches. A Solutions Architect has been asked to fix existing patch deficiencies, and to develop a workflow to ensure that future patching requirements are identified and taken care of quickly. The Solutions Architect has decided to use AWS Systems Manager. It is important that EC2 instance reboots do not occur at the same time on all Windows workloads to meet organizational uptime requirements.

Which workflow will meet these requirements in an automated manner?

- A. Add a Patch Group tag with a value of Windows Servers to all existing EC2 instances. Ensure that all Windows EC2 instances are assigned this tag. Associate the AWS-DefaultPatchBaseline to the Windows Servers patch group. Define an AWS Systems Manager maintenance window, conduct patching within it, and associate it with the Windows Servers patch group. Register instances with the maintenance window using associated subnet IDs. Assign the AWS-RunPatchBaseline document as a task within each maintenance window.

- B. Add a Patch Group tag with a value of Windows Servers to all existing EC2 instances. Ensure that all Windows EC2 instances are assigned this tag. Associate the AWS-WindowsPatchBaseline document as a task associated with the Windows Servers patch group. Create an Amazon CloudWatch Events rule configured to use a cron expression to schedule the execution of patching using the AWS Systems Manager run command. Create an AWS Systems Manager State Manager document to define commands to be executed during patch execution.

- C. Add a Patch Group tag with a value of either Windows Servers1 or Windows Servers2 to all existing EC2 instances. Ensure that all Windows EC2 instances are assigned this tag. Associate the AWS-DefaultPatchBaseline with both Windows Servers patch groups. Define two non-overlapping AWS Systems Manager maintenance windows, conduct patching within them, and associate each with a different patch group. Register targets with specific maintenance windows using the Patch Group tags. Assign the AWS-RunPatchBaseline document as a task within each maintenance window.

- D. Add a Patch Group tag with a value of either Windows Servers1 or Windows Servers2 to all existing EC2 instances. Ensure that all Windows EC2 instances are assigned this tag. Associate the AWS-WindowsPatchBaseline with both Windows Servers patch groups. Define two non-overlapping AWS Systems Manager maintenance windows, conduct patching within them, and associate each with a different patch group. Assign the AWS-RunWindowsPatchBaseline document as a task within each maintenance window. Create an AWS Systems Manager State Manager document to define commands to be executed during patch execution.

Answer: C

NEW QUESTION 13

A company is moving a business-critical, multi-tier application to AWS. The architecture consists of a desktop client application and server infrastructure. The server infrastructure resides in an on-premises data center that frequently fails to maintain the application uptime SLA of 99.95%. A Solutions Architect must re-architect the application to ensure that it can meet or exceed the SLA.

The application contains a PostgreSQL database running on a single virtual machine. The business logic and presentation layers are load balanced between multiple virtual machines. Remote users complain about slow load times while using this latency-sensitive application.

Which of the following will meet the availability requirements with little change to the application while improving user experience and minimizing costs?

- A. Migrate the database to a PostgreSQL database in Amazon EC2. Host the application and presentation layers in automatically scaled Amazon ECS containers behind an Application Load Balancer. Allocate an Amazon WorkSpaces WorkSpace for each end user to improve the user experience.

- B. Migrate the database to an Amazon RDS Aurora PostgreSQL configuration. Host the application and presentation layers in an Auto Scaling configuration on Amazon EC2 instances behind an Application Load Balancer. Use Amazon AppStream 2.0 to improve the user experience.

- C. Migrate the database to an Amazon RDS PostgreSQL Multi-AZ configuration. Host the application and presentation layers in automatically scaled AWS Fargate containers behind a Network Load Balancer. Use Amazon ElastiCache to improve the user experience.

- D. Migrate the database to an Amazon Redshift cluster with at least two nodes. Combine and host the application and presentation layers in automatically scaled Amazon ECS containers behind an Application Load Balancer. Use Amazon CloudFront to improve the user experience.

Answer: B
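The 99.95% uptime SLA translates into a small downtime budget, which is why a database on a single virtual machine is hard to reconcile with it. A short sketch of the conversion; the 30-day month and 365-day year used as measurement periods are assumptions, since the question does not say how the SLA is measured.

```python
def allowed_downtime_minutes(sla_percent, period_hours):
    """Maximum downtime, in minutes, that still meets the SLA over the period."""
    return period_hours * 60 * (1 - sla_percent / 100)


per_month = allowed_downtime_minutes(99.95, 30 * 24)   # about 21.6 minutes
per_year = allowed_downtime_minutes(99.95, 365 * 24)   # about 262.8 minutes
```

That is roughly 21.6 minutes per 30-day month, or a little under 4.4 hours per year.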

NEW QUESTION 14

A company has been using a third-party provider for its content delivery network and recently decided to switch to Amazon CloudFront. The Development team wants to maximize performance for the global user base. The company uses a content management system (CMS) that serves both static and dynamic content. The CMS is behind an Application Load Balancer (ALB) which is set as the default origin for the distribution. Static assets are served from an Amazon S3 bucket. The Origin Access Identity (OAI) was created properly and the S3 bucket policy has been updated to allow the GetObject action from the OAI, but static assets are receiving a 404 error.

Which combination of steps should the Solutions Architect take to fix the error? (Select TWO.)

- A. Add another origin to the CloudFront distribution for the static assets

- B. Add a path based rule to the ALB to forward requests for the static assets

- C. Add an RTMP distribution to allow caching of both static and dynamic content

- D. Add a behavior to the CloudFront distribution for the path pattern and the origin of the static assets

- E. Add a host header condition to the ALB listener and forward the header from CloudFront to add traffic to the allow list

Answer: AD

NEW QUESTION 15

A company has created an account for individual Development teams, resulting in a total of 200 accounts. All accounts have a single virtual private cloud (VPC) in a single region with multiple microservices running in Docker containers that need to communicate with microservices in other accounts. The Security team requirements state that these microservices must not traverse the public internet, and only certain internal services should be allowed to call other individual services. If there is any denied network traffic for a service, the Security team must be notified of any denied requests, including the source IP.

How can connectivity be established between services while meeting the security requirements?

- A. Create a VPC peering connection between the VPCs. Use security groups on the instances to allow traffic from the security group IDs that are permitted to call the microservice. Apply network ACLs to allow traffic from the local VPC and peered VPCs only. Within the task definition in Amazon ECS for each of the microservices, specify a log configuration by using the awslogs driver. Within Amazon CloudWatch Logs, create a metric filter and alarm off of the number of HTTP 403 responses. Create an alarm when the number of messages exceeds a threshold set by the Security team.

- B. Ensure that no CIDR ranges are overlapping, and attach a virtual private gateway (VGW) to each VPC. Provision an IPsec tunnel between each VGW and enable route propagation on the route table. Configure security groups on each service to allow the CIDR ranges of the VPCs on the other accounts. Enable VPC Flow Logs, and use an Amazon CloudWatch Logs subscription filter for rejected traffic. Create an IAM role and allow the Security team to call the AssumeRole action for each account.

- C. Deploy a transit VPC by using third-party marketplace VPN appliances running on Amazon EC2, dynamically routed VPN connections between the VPN appliance and the virtual private gateways (VGWs) attached to each VPC within the region. Adjust network ACLs to allow traffic from the local VPC only. Apply security groups to the microservices to allow traffic from the VPN appliances only. Install the awslogs agent on each VPN appliance, and configure logs to forward to Amazon CloudWatch Logs in the security account for the Security team to access.

- D. Create a Network Load Balancer (NLB) for each microservice. Attach the NLB to a PrivateLink endpoint service and whitelist the accounts that will be consuming this service. Create an interface endpoint in the consumer VPC and associate a security group that allows only the security group IDs of the services authorized to call the producer service. On the producer services, create security groups for each microservice and allow only the CIDR range of the allowed services. Create VPC Flow Logs on each VPC to capture rejected traffic that will be delivered to an Amazon CloudWatch Logs group. Create a CloudWatch Logs subscription that streams the log data to a security account.

Answer: D

Explanation:

AWS PrivateLink provides private connectivity between VPCs, AWS services, and on-premises applications, securely on the Amazon network. AWS PrivateLink makes it easy to connect services across different accounts and VPCs to significantly simplify the network architecture. In that sense it resembles the next step beyond VPC peering. https://aws.amazon.com/privatelink/

NEW QUESTION 16

A company is currently running a production workload on AWS that is very I/O intensive. Its workload consists of a single tier with 10 c4.8xlarge instances, each with 2 TB gp2 volumes. The number of processing jobs has recently increased, and latency has increased as well. The team realizes that they are constrained on the IOPS. For the application to perform efficiently, they need to increase the IOPS by 3,000 for each of the instances.

Which of the following designs will meet the performance goal MOST cost effectively?

- A. Change the type of Amazon EBS volume from gp2 to io1 and set provisioned IOPS to 9,000.

- B. Increase the size of the gp2 volumes in each instance to 3 TB.

- C. Create a new Amazon EFS file system and move all the data to this new file system. Mount this file system to all 10 instances.

- D. Create a new Amazon S3 bucket and move all the data to this new bucket. Allow each instance to access this S3 bucket and use it for storage.

Answer: B
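
The arithmetic behind this answer can be sketched as follows. It assumes the gp2 baseline of 3 IOPS per GiB (with a 100 IOPS floor and a 16,000 IOPS ceiling) and treats 1 TB as 1,000 GiB to keep the numbers round. The function and constant names are illustrative only.

```python
# Minimal sketch of gp2 baseline IOPS as a function of volume size.
# Assumption: 3 IOPS per GiB, clamped to the range 100..16,000.
GP2_IOPS_PER_GIB = 3
GP2_MIN_IOPS = 100
GP2_MAX_IOPS = 16_000


def gp2_baseline_iops(size_gib: int) -> int:
    """Return the baseline IOPS of a gp2 volume of the given size."""
    return max(GP2_MIN_IOPS, min(GP2_MAX_IOPS, size_gib * GP2_IOPS_PER_GIB))


current = gp2_baseline_iops(2_000)   # the existing 2 TB volumes
target = current + 3_000             # the increase the question asks for
resized = gp2_baseline_iops(3_000)   # the 3 TB volumes from option B

print(current, target, resized)
```

Under these assumptions, growing each volume from 2 TB to 3 TB raises the baseline from 6,000 to 9,000 IOPS, which is the same figure option A would provision on io1.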

NEW QUESTION 17

A company has deployed an application to multiple environments in AWS, including production and testing. The company has separate accounts for production and testing, and users are allowed to create additional application users for team members or services, as needed. The Security team has asked the Operations team for better isolation between production and testing with centralized controls on security credentials and improved management of permissions between environments.

Which of the following options would MOST securely accomplish this goal?

- A. Create a new AWS account to hold user and service accounts, such as an identity account. Create users and groups in the identity account. Create roles with appropriate permissions in the production and testing accounts. Add the identity account to the trust policies for the roles.

- B. Modify permissions in the production and testing accounts to limit creating new IAM users to members of the Operations team. Set a strong IAM password policy on each account. Create new IAM users and groups in each account to limit developer access to just the services required to complete their job function.

- C. Create a script that runs on each account that checks user accounts for adherence to a security policy. Disable any user or service accounts that do not comply.

- D. Create all user accounts in the production account. Create roles for access in the production account and testing accounts. Grant cross-account access from the production account to the testing account.

Answer: A

Explanation:

https://aws.amazon.com/blogs/security/how-to-centralize-and-automate-iam-policy-creation-in-sandbox-develop
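
The step "add the identity account to the trust policies for the roles" can be sketched as a role trust policy document. This is a minimal illustration, not the company's actual policy: the account ID `111122223333` and the function name are hypothetical, and a real policy would usually add conditions such as MFA.

```python
import json

# Hypothetical 12-digit ID of the central identity account (assumption).
IDENTITY_ACCOUNT_ID = "111122223333"


def build_trust_policy(identity_account_id: str) -> dict:
    """Build a trust policy that lets principals in the identity account
    assume a role defined in the production or testing account."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {
                    "AWS": f"arn:aws:iam::{identity_account_id}:root"
                },
                "Action": "sts:AssumeRole",
            }
        ],
    }


policy = build_trust_policy(IDENTITY_ACCOUNT_ID)
print(json.dumps(policy, indent=2))
```

With this arrangement, users exist only in the identity account, and what they can do in production or testing is determined by the permissions attached to the roles they assume there.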

NEW QUESTION 18

An advisory firm is creating a secure data analytics solution for its regulated financial services users. Users will upload their raw data to an Amazon S3 bucket, where they have PutObject permissions only. Data will be analyzed by applications running on an Amazon EMR cluster launched in a VPC. The firm requires that the environment be isolated from the internet. All data at rest must be encrypted using keys controlled by the firm.

Which combination of actions should the Solutions Architect take to meet the user's security requirements? (Select TWO.)

- A. Launch the Amazon EMR cluster in a private subnet configured to use an AWS KMS CMK for at-rest encryption. Configure a gateway VPC endpoint for Amazon S3 and an interface VPC endpoint for AWS KMS.

- B. Launch the Amazon EMR cluster in a private subnet configured to use an AWS KMS CMK for at-rest encryption. Configure a gateway VPC endpoint for Amazon S3 and a NAT gateway to access AWS KMS.

- C. Launch the Amazon EMR cluster in a private subnet configured to use an AWS CloudHSM appliance for at-rest encryption. Configure a gateway VPC endpoint for Amazon S3 and an interface VPC endpoint for CloudHSM.

- D. Configure the S3 endpoint policies to permit access to the necessary data buckets only.

- E. Configure the S3 bucket policies to permit access using an aws:sourceVpce condition to match the S3 endpoint ID.

Answer: AC
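
For reference, the `aws:sourceVpce` condition mentioned in option E can be sketched as a bucket policy document. This is only an illustration of the condition key: the bucket name, the endpoint ID, and the function name are hypothetical, and a real policy would have to be scoped to the firm's actual access patterns.

```python
import json

# Hypothetical names used only for illustration (assumptions).
BUCKET_NAME = "example-raw-data-bucket"
VPC_ENDPOINT_ID = "vpce-1a2b3c4d"


def build_vpce_bucket_policy(bucket: str, vpce_id: str) -> dict:
    """Build a bucket policy that denies S3 actions on the bucket unless
    the request arrives through the given VPC endpoint."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyUnlessFromVpcEndpoint",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                "Resource": [
                    f"arn:aws:s3:::{bucket}",
                    f"arn:aws:s3:::{bucket}/*",
                ],
                "Condition": {
                    "StringNotEquals": {"aws:sourceVpce": vpce_id}
                },
            }
        ],
    }


bucket_policy = build_vpce_bucket_policy(BUCKET_NAME, VPC_ENDPOINT_ID)
print(json.dumps(bucket_policy, indent=2))
```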

NEW QUESTION 19

A Solutions Architect must migrate an existing on-premises web application with 70 TB of static files supporting a public open-data initiative. The architect wants to upgrade to the latest version of the host operating system as part of the migration effort.

Which is the FASTEST and MOST cost-effective way to perform the migration?

- A. Run a physical-to-virtual conversion on the application server. Transfer the server image over the internet, and transfer the static data to Amazon S3.

- B. Run a physical-to-virtual conversion on the application server. Transfer the server image over AWS Direct Connect, and transfer the static data to Amazon S3.

- C. Re-platform the server to Amazon EC2, and use AWS Snowball to transfer the static data to Amazon S3.

- D. Re-platform the server by using the AWS Server Migration Service to move the code and data to a new Amazon EC2 instance.

Answer: C

NEW QUESTION 20

A company is using AWS CloudFormation to deploy its infrastructure. The company is concerned that, if a production CloudFormation stack is deleted, important data stored in Amazon RDS databases or Amazon EBS volumes might also be deleted.

How can the company prevent users from accidentally deleting data in this way?

- A. Modify the CloudFormation templates to add a DeletionPolicy attribute to RDS and EBS resources.

- B. Configure a stack policy that disallows the deletion of RDS and EBS resources.

- C. Modify IAM policies to deny deleting RDS and EBS resources that are tagged with an “aws:cloudformation:stack-name” tag.

- D. Use AWS Config rules to prevent deleting RDS and EBS resources.

Answer: A

Explanation:

With the DeletionPolicy attribute you can preserve or (in some cases) back up a resource when its stack is deleted. You specify a DeletionPolicy attribute for each resource that you want to control. If a resource has no DeletionPolicy attribute, AWS CloudFormation deletes the resource by default. To keep a resource when its stack is deleted, specify Retain for that resource. You can use Retain for any resource. For example, you can retain a nested stack, Amazon S3 bucket, or EC2 instance so that you can continue to use or modify those resources after you delete their stacks. https://docs.aws.amazon.com/AWSCloudFormation/latest/UserGuide/aws-attribute-deletionpolicy.html
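
As a rough sketch of what option A looks like in practice, the fragment below builds a JSON-style template in which an RDS instance is snapshotted and an EBS volume is retained on stack deletion. The logical IDs, property values, and helper function are hypothetical, and the fragment omits properties (such as database credentials) that a deployable template would need.

```python
import json

# Illustrative template fragment; logical IDs and property values are
# placeholders, not taken from the question.
template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Resources": {
        "AppDatabase": {
            "Type": "AWS::RDS::DBInstance",
            # Take a final snapshot before the instance is deleted.
            "DeletionPolicy": "Snapshot",
            "Properties": {
                "Engine": "mysql",
                "DBInstanceClass": "db.t3.micro",
                "AllocatedStorage": "20",
            },
        },
        "DataVolume": {
            "Type": "AWS::EC2::Volume",
            # Leave the volume in place when the stack is deleted.
            "DeletionPolicy": "Retain",
            "Properties": {"Size": 100, "AvailabilityZone": "us-east-1a"},
        },
    },
}


def resources_without_deletion_policy(tpl: dict) -> list:
    """List logical IDs that would be deleted along with the stack."""
    return [
        name
        for name, resource in tpl["Resources"].items()
        if "DeletionPolicy" not in resource
    ]


print(json.dumps(template, indent=2))
print(resources_without_deletion_policy(template))
```

A check like `resources_without_deletion_policy` could run in a review step to flag data-bearing resources that would fall back to the default delete behavior.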

NEW QUESTION 21

A Solutions Architect is designing the storage layer for a recently purchased application. The application will be running on Amazon EC2 instances and has the following layers and requirements:

Data layer: A POSIX file system shared across many systems.

Service layer: Static file content that requires block storage with more than 100k IOPS.

Which combination of AWS services will meet these needs? (Choose two.)

- A. Data layer – Amazon S3

- B. Data layer – Amazon EC2 Ephemeral Storage

- C. Data layer – Amazon EFS

- D. Service layer – Amazon EBS volumes with Provisioned IOPS

- E. Service layer – Amazon EC2 Ephemeral Storage

Answer: CE

Explanation:

https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/storage-optimized-instances.html

NEW QUESTION 22

A company has a data center that must be migrated to AWS as quickly as possible. The data center has a 500 Mbps AWS Direct Connect link and a separate, fully available 1 Gbps ISP connection. A Solutions Architect must transfer 20 TB of data from the data center to an Amazon S3 bucket.

What is the FASTEST way to transfer the data?

- A. Upload the data to the S3 bucket using the existing DX link.

- B. Send the data to AWS using the AWS Import/Export service.

- C. Upload the data using an 80 TB AWS Snowball device.

- D. Upload the data to the S3 bucket using S3 Transfer Acceleration.

Answer: D

Explanation:

https://aws.amazon.com/s3/faqs/
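
A back-of-the-envelope comparison of the two network paths can be sketched as follows. It assumes ideal line rate with no protocol overhead and decimal units (1 TB = 10^12 bytes), so real transfers would take longer. The function name is illustrative.

```python
def transfer_hours(data_tb: float, link_mbps: float) -> float:
    """Estimate hours needed to move data_tb terabytes over a link of
    link_mbps megabits per second, assuming the link is fully used."""
    bits = data_tb * 1e12 * 8
    seconds = bits / (link_mbps * 1e6)
    return seconds / 3600


dx_hours = transfer_hours(20, 500)     # 500 Mbps Direct Connect link
isp_hours = transfer_hours(20, 1000)   # 1 Gbps ISP connection

print(f"Direct Connect: {dx_hours:.1f} h")
print(f"ISP link:       {isp_hours:.1f} h")
```

Under these assumptions, the 1 Gbps ISP link moves 20 TB in roughly 44 hours, about half the time of the 500 Mbps Direct Connect link. S3 Transfer Acceleration is the option that uploads over the internet connection.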

NEW QUESTION 23

A company is finalizing the architecture for its backup solution for applications running on AWS. All of the applications run on AWS and use at least two Availability Zones in each tier.

Company policy requires IT to durably store nightly backups for all its data in at least two locations: production and disaster recovery. The locations must be in different geographic regions. The company also needs the backup to be available to restore immediately at the production data center, and within 24 hours at the disaster recovery location. All backup processes must be fully automated.

What is the MOST cost-effective backup solution that will meet all requirements?

- A. Back up all the data to a large Amazon EBS volume attached to the backup media server in the production region. Run automated scripts to snapshot these volumes nightly, and copy these snapshots to the disaster recovery region.

- B. Back up all the data to Amazon S3 in the disaster recovery region. Use a lifecycle policy to move this data to Amazon Glacier in the production region immediately. Only the data is replicated; remove the data from the S3 bucket in the disaster recovery region.

- C. Back up all the data to Amazon Glacier in the production region. Set up cross-region replication of this data to Amazon Glacier in the disaster recovery region. Set up a lifecycle policy to delete any data older than 60 days.

- D. Back up all the data to Amazon S3 in the production region. Set up cross-region replication of this S3 bucket to another region and set up a lifecycle policy in the second region to immediately move this data to Amazon Glacier.

Answer: D


P.S. Easily pass the SAP-C01 exam with the 179 Q&As in the Certifytools dumps and PDF version. Download the newest Certifytools SAP-C01 dumps: https://www.certifytools.com/SAP-C01-exam.html (179 New Questions)