Improved SAA-C01 Torrent 2021

Certleader offers a free demo for the SAA-C01 exam. "AWS Certified Solutions Architect - Associate", also known as the SAA-C01 exam, is an Amazon-Web-Services certification. This set of posts, Passing the Amazon-Web-Services SAA-C01 exam, will help you answer those questions. The SAA-C01 Questions & Answers cover all the knowledge points of the real exam. 100% real Amazon-Web-Services SAA-C01 exams, revised by experts!

Online SAA-C01 free questions and answers of New Version:

NEW QUESTION 1

Location of Instances is _____

- A. Regional

- B. based on Availability Zone

- C. Global

Answer: B

Explanation:

Regions and Availability Zones

Amazon EC2 is hosted in multiple locations world-wide. These locations are composed of regions and Availability Zones. Each region is a separate geographic area. Each region has multiple, isolated locations known as Availability Zones. Amazon EC2 provides you the ability to place resources, such as instances, and data in multiple locations. Resources aren't replicated across regions unless you do so specifically. http://docs.aws.amazon.com/AWSEC2/latest/UserGuide/using-regions-availability-zones.html#concepts-regions-availability-zones

NEW QUESTION 2

A customer’s security team requires the logging of all network access attempts to Amazon EC2 instances in their production VPC on AWS.

Which configuration will meet the security team’s requirement?

- A. Enable CloudTrail for the production VPC.

- B. Enable VPC Flow Logs for the production VPC.

- C. Enable both CloudTrail and VPC Flow Logs for the production VPC.

- D. Enable both CloudTrail and VPC Flow Logs for the AWS account.

Answer: B

NEW QUESTION 3

Which Auto Scaling features allow you to scale ahead of expected increases in load? (Select TWO.)

- A. Cooldown period

- B. Lifecycle hooks

- C. Desired capacity

- D. Scheduled scaling

- E. Health check grace period

- F. Metric-based scaling

Answer: DF

NEW QUESTION 4

A Solutions Architect is designing an application on AWS that uses persistent block storage. Data must be encrypted at rest.

Which solution meets the requirement?

- A. Enable SSL on Amazon EC2 instances.

- B. Encrypt Amazon EBS volumes on Amazon EC2 instances.

- C. Enable server-side encryption on Amazon S3.

- D. Encrypt Amazon EC2 Instance Storage.

Answer: B

Explanation:

Reference https://aws.amazon.com/blogs/aws/protect-your-data-with-new-ebs-encryption/

NEW QUESTION 5

The AWS CloudHSM service defines a resource known as a high-availability (HA) ____, which is a virtual partition that represents a group of partitions, typically distributed between several physical HSMs for high-availability.

- A. proxy group

- B. partition group

- C. functional group

- D. relational group

Answer: B

Explanation:

The AWS CloudHSM service defines a resource known as a high-availability (HA) partition group, which is a virtual partition that represents a group of partitions, typically distributed between several physical HSMs for high-availability.

NEW QUESTION 6

Your customer is willing to consolidate their log streams (access logs, application logs, security logs, etc.) in one single system. Once consolidated, the customer wants to analyze these logs in real time based on heuristics. From time to time, the customer needs to validate heuristics, which requires going back to data samples extracted from the last 12 hours.

What is the best approach to meet your customer’s requirements?

- A. Send all the log events to Amazon SQS. Set up an Auto Scaling group of EC2 servers to consume the logs and apply the heuristics.

- B. Send all the log events to Amazon Kinesis and develop a client process to apply heuristics on the logs.

- C. Configure Amazon CloudTrail to receive custom logs, and use EMR to apply heuristics on the logs.

- D. Set up an Auto Scaling group of EC2 syslogd servers, store the logs on S3, and use EMR to apply heuristics on the logs.

Answer: B

Explanation:

Amazon Kinesis Streams allows for real-time data processing. With Amazon Kinesis Streams, you can continuously collect data as it is generated and promptly react to critical information about your business and operations.

https://aws.amazon.com/kinesis/streams/

NEW QUESTION 7

Using SAML (Security Assertion Markup Language 2.0) you can give your federated users single sign-on (SSO) access to the AWS Management Console.

- A. True

- B. False

Answer: A

NEW QUESTION 8

In reviewing the Auto Scaling events for your application you notice that your application is scaling up and down multiple times in the same hour. What design choice could you make to optimize for cost while preserving elasticity?

Choose 2 answers

- A. Modify the Auto Scaling policy to use scheduled scaling actions

- B. Modify the Auto Scaling group termination policy to terminate the oldest instance first.

- C. Modify the Auto Scaling group cool-down timers.

- D. Modify the Amazon CloudWatch alarm period that triggers your Auto Scaling scale down policy.

- E. Modify the Auto Scaling group termination policy to terminate the newest instance first.

Answer: CD
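The effect of a cooldown timer on this kind of flapping can be sketched in a few lines. This is a simplified model, not the Auto Scaling implementation: the function name `apply_cooldown`, the event tuples and the timings are all hypothetical, and the only rule modelled is that a scaling request is ignored while the cooldown started by the previous executed request is still running.

```python
def apply_cooldown(requests, cooldown_seconds):
    """Return the scaling requests that would actually run.

    `requests` is a list of (timestamp_seconds, action) tuples sorted by time.
    A request is ignored if it arrives before the cooldown started by the
    previously executed request has expired (simplified, assumed rule).
    """
    executed = []
    last_run = None
    for timestamp, action in requests:
        if last_run is None or timestamp - last_run >= cooldown_seconds:
            executed.append((timestamp, action))
            last_run = timestamp
    return executed


# Hypothetical flapping pattern: four scaling requests within 15 minutes.
flapping = [(0, "scale_out"), (120, "scale_in"), (240, "scale_out"), (900, "scale_in")]

short_cooldown = apply_cooldown(flapping, cooldown_seconds=60)
long_cooldown = apply_cooldown(flapping, cooldown_seconds=600)
print(len(short_cooldown), len(long_cooldown))
```

With the longer cooldown, the two requests that arrive at 120 and 240 seconds are suppressed, so fewer instances are launched and terminated.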

NEW QUESTION 9

What is the Reduced Redundancy option in Amazon S3?

- A. Less redundancy for a lower cost.

- B. It doesn't exist in Amazon S3, but in Amazon EBS.

- C. It allows you to destroy any copy of your files outside a specific jurisdiction.

- D. It doesn't exist at all.

Answer: A

NEW QUESTION 10

What does the "Server Side Encryption" option on Amazon S3 provide?

- A. It provides an encrypted virtual disk in the Cloud.

- B. It doesn't exist for Amazon S3, but only for Amazon EC2.

- C. It encrypts the files that you send to Amazon S3, on the server side.

- D. It allows you to upload files using an SSL endpoint, for a secure transfer.

Answer: C

Explanation:

Server-side encryption is about protecting data at rest. Server-side encryption with Amazon S3-managed encryption keys (SSE-S3) employs strong multi-factor encryption.

Amazon S3 encrypts each object with a unique key. As an additional safeguard, it encrypts the key itself with a master key that it regularly rotates. Amazon S3 server-side encryption uses one of the strongest block ciphers available, 256-bit Advanced Encryption Standard (AES-256), to encrypt your data.

NEW QUESTION 11

Your customer wishes to deploy an enterprise application to AWS which will consist of several web servers, several application servers and a small (50GB) Oracle database. Information is stored both in the database and in the file systems of the various servers. The backup system must support database recovery, whole server and whole disk restores, and individual file restores with a recovery time of no more than two hours. They have chosen to use RDS Oracle as the database.

Which backup architecture will meet these requirements?

- A. Back up RDS using automated daily DB backups. Back up the EC2 instances using AMIs, and supplement with file-level backup to S3 using traditional enterprise backup software to provide file-level restore.

- B. Back up RDS using a Multi-AZ Deployment. Back up the EC2 instances using AMIs, and supplement by copying file system data to S3 to provide file-level restore.

- C. Back up RDS using automated daily DB backups. Back up the EC2 instances using EBS snapshots, and supplement with file-level backups to Amazon Glacier using traditional enterprise backup software to provide file-level restore.

- D. Back up the RDS database to S3 using Oracle RMAN. Back up the EC2 instances using AMIs, and supplement with EBS snapshots for individual volume restore.

Answer: A

Explanation:

You need to use enterprise backup software to provide file level restore. See https://d0.awsstatic.com/whitepapers/Backup_and_Recovery_Approaches_Using_AWS.pdf Page 18:

If your existing backup software does not natively support the AWS cloud, you can use AWS storage gateway products. AWS Storage Gateway is a virtual appliance that provides seamless and secure integration between your data center and the AWS storage infrastructure.

NEW QUESTION 12

Can an EBS volume be attached to more than one EC2 instance at the same time?

- A. No

- B. Yes.

- C. Only EC2-optimized EBS volumes.

- D. Only in read mode.

Answer: A

Explanation:

EBS is network-attached storage that can only be attached to one instance at a time. https://aws.amazon.com/ebs/getting-started/ https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/EBSVolumes.html

NEW QUESTION 13

You need to measure the performance of your EBS volumes as they seem to be under performing. You have come up with a measurement of 1,024 KB I/O but your colleague tells you that EBS volume performance is measured in IOPS. How many IOPS is equal to 1,024 KB I/O?

- A. 16

- B. 256

- C. 8

- D. 4

Answer: D

Explanation:

Several factors can affect the performance of Amazon EBS volumes, such as instance configuration, I/O characteristics, workload demand, and storage configuration. IOPS are input/output operations per second. Amazon EBS counts each I/O operation that is 256 KB or smaller as one IOPS. I/O operations that are larger than 256 KB are counted in 256 KB capacity units.

For example, a 1,024 KB I/O operation would count as 4 IOPS. When you provision a 4,000 IOPS volume and attach it to an EBS-optimized instance that can provide the necessary bandwidth, you can transfer up to 4,000 chunks of data per second (provided that the I/O does not exceed the 128 MB/s per volume throughput limit of General Purpose (SSD) and Provisioned IOPS (SSD) volumes).
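The arithmetic in this explanation can be checked with a short sketch. The function name `io_capacity_units` is hypothetical, and rounding a partial unit up to a whole one is an assumption; the 256 KB unit size comes from the explanation above.

```python
import math

CAPACITY_UNIT_KB = 256  # unit size stated in the explanation above


def io_capacity_units(io_size_kb):
    """Count how many 256 KB capacity units a single I/O operation uses.

    Operations of 256 KB or smaller count as one unit; larger operations
    are counted in 256 KB units (partial units rounded up, an assumption).
    """
    if io_size_kb <= 0:
        raise ValueError("I/O size must be positive")
    return math.ceil(io_size_kb / CAPACITY_UNIT_KB)


print(io_capacity_units(1024))  # the 1,024 KB operation from the question
```

For the 1,024 KB operation in the question this gives 1,024 / 256 = 4, which matches answer D.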

NEW QUESTION 14

In Amazon EC2, partial instance-hours are billed ____.

- A. per second used in the hour

- B. per minute used

- C. by combining partial segments into full hours

- D. as full hours

Answer: D

Explanation:

Partial instance-hours are billed to the next hour.
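Under the hourly model this question describes, the billed hours are simply the running time rounded up to the next whole hour. A minimal sketch, with a hypothetical function name and no pricing data:

```python
import math

SECONDS_PER_HOUR = 3600


def billed_instance_hours(seconds_running):
    """Round running time up to whole hours, as in the hourly model above."""
    if seconds_running < 0:
        raise ValueError("running time cannot be negative")
    return math.ceil(seconds_running / SECONDS_PER_HOUR)


print(billed_instance_hours(61 * 60))  # 61 minutes of use
```

An instance that runs for 61 minutes is therefore billed for two full hours in this model.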

NEW QUESTION 15

True or False: Without IAM, you cannot control the tasks a particular user or system can do and what AWS resources they might use.

- A. FALSE

- B. TRUE

Answer: B

Explanation:

http://docs.aws.amazon.com/IAM/latest/UserGuide/getting-setup.html

NEW QUESTION 16

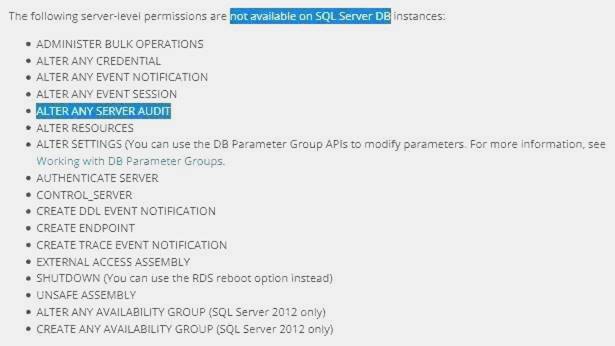

Is the SQL Server Audit feature supported in the Amazon RDS SQL Server engine?

- A. No

- B. Yes

Answer: A

Explanation:

http://docs.aws.amazon.com/AmazonRDS/latest/UserGuide/CHAP_SQLServer.html

NEW QUESTION 17

Without _____, you must either create multiple AWS accounts (each with its own billing and subscriptions to AWS products) or your employees must share the security credentials of a single AWS account.

- A. Amazon RDS

- B. Amazon Glacier

- C. Amazon EMR

- D. Amazon IAM

Answer: D

NEW QUESTION 18

Your company has decided to set up a new AWS account for test and dev purposes. They already use AWS for production, but would like a new account dedicated to test and dev so as not to accidentally break the production environment. You launch an exact replica of your production environment using a CloudFormation template that your company uses in production. However, CloudFormation fails. You use the exact same CloudFormation template in production, so the failure is something to do with your new AWS account. The CloudFormation template is trying to launch 60 new EC2 instances in a single AZ. After some research you discover that the problem is:

- A. For all new AWS accounts there is a soft limit of 20 EC2 instances per region. You should submit the limit increase form and retry the template after your limit has been increased.

- B. For all new AWS accounts there is a soft limit of 20 EC2 instances per availability zone. You should submit the limit increase form and retry the template after your limit has been increased.

- C. You cannot launch more than 20 instances in your default VPC; instead, reconfigure the CloudFormation template to provision the instances in a custom VPC.

- D. Your CloudFormation template is configured to use the parent account and not the new account. Change the account number in the CloudFormation template and relaunch the template.

Answer: A

NEW QUESTION 19

A newspaper organization has an on-premises application which allows the public to search its back catalogue and retrieve individual newspaper pages via a website written in Java. They have scanned the old newspapers into JPEGs (approx 17TB) and used Optical Character Recognition (OCR) to populate a commercial search product. The hosting platform and software are now end of life, and the organization wants to migrate its archive to AWS and produce a cost-efficient architecture that is still designed for availability and durability. Which is the most appropriate?

- A. Use S3 with reduced redundancy to store and serve the scanned files, install the commercial search application on EC2 instances and configure with auto-scaling and an Elastic Load Balancer.

- B. Model the environment using CloudFormation, use an EC2 instance running Apache webserver and an open source search application, and stripe multiple standard EBS volumes together to store the JPEGs and search index.

- C. Use S3 with standard redundancy to store and serve the scanned files, use CloudSearch for query processing, and use Elastic Beanstalk to host the website across multiple availability zones.

- D. Use a single-AZ RDS MySQL instance to store the search index and the JPEG images, and use an EC2 instance to serve the website and translate user queries into SQL.

- E. Use a CloudFront download distribution to serve the JPEGs to the end users and install the current commercial search product, along with a Java container for the website, on EC2 instances and use Route53 with DNS round-robin.

Answer: C

Explanation:

There is no such thing as "Most appropriate" without knowing all your goals. I find your scenarios very fuzzy, since you can obviously mix-n-match between them. I think you should decide by layers instead:

Load Balancer Layer: ELB or just DNS, or roll-your-own. (Using DNS+EIPs is slightly cheaper, but less reliable than ELB.)

Storage Layer for 17TB of Images: This is the perfect use case for S3. Off-load all the web requests directly to the relevant JPEGs in S3. Your EC2 boxes just generate links to them.

If your app already serves its own images (not links to images), you might start with EFS. But more than likely, you can just set up a web server to re-write or re-direct all JPEG links to S3 pretty easily. If you use S3, don't serve directly from the bucket. Serve via a CNAME in a domain you control. That way, you can switch in CloudFront easily.

EBS will be way more expensive, and you'll need 2x the drives if you need 2 boxes. Yuck. Consider a smaller storage format. For example, JPEG 2000 or WebP or other tools might make for smaller images. There is also the DjVu format from a while back.

Cache Layer: Adding CloudFront in front of S3 will help people on the other side of the world -- well, possibly. Typical archives follow a power law. The long tail of requests means that most JPEGs won't be requested enough to be in the cache. So you are only speeding up the most popular objects. You can always wait, and switch in CF later after you know your costs better. (In some cases, it can actually lower costs.)

You can also put CloudFront in front of your app, since your archive search results should be fairly static. This will also allow you to run with a smaller instance type, since CF will handle much of the load if you do it right.

Database Layer: A few options:

Use whatever your current server does for now, and replace with something else down the road. Don't under-estimate this approach, sometimes it's better to start now and optimize later.

Use RDS to run MySQL/Postgres

I'm not as familiar with ElasticSearch / Cloudsearch, but obviously Cloudsearch will be less maintenance+setup.

App Layer:

When creating the app layer from scratch, consider CloudFormation and/or OpsWorks. It's extra stuff to learn, but helps down the road.

Java+Tomcat is right up the alley of ElasticBeanstalk. (Basically EC2 + Autoscale + ELB).

Preventing Abuse: When you put something in a public S3 bucket, people will hot-link it from their web pages. If you want to prevent that, your app on the EC2 box can generate signed links to S3 that expire in a few hours. Now everyone will be forced to go thru the app, and the app can apply rate limiting, etc.

Saving money: If you don't mind having downtime:

run everything in one AZ (both DBs and EC2s). You can always add servers and AZs down the road, as long as it's architected to be stateless. In fact, you should use multiple regions if you want it to be really robust.

use Reduced Redundancy in S3 to save a few hundred bucks per month (Someone will have to "go fix it" every time it breaks, including having an off-line copy to repair S3.)

Buy Reserved Instances on your EC2 boxes to make them cheaper. (Start with the RI market and buy a partially used one to get started.) It's just a coupon saying "if you run this type of box in this AZ, you will save on the per-hour costs." You can get 1/2 to 1/3 off easily.

Rewrite the application to use less memory and CPU - that way you can run on fewer/smaller boxes. (May or may not be worth the investment.)

If your app will be used very infrequently, you will save a lot of money by using Lambda. I'd be worried that it would be quite slow if you tried to run a Java application on it though.

We're missing some information like load, latency expectations from search, indexing speed, size of the search index, etc. But with what you've given us, I would go with S3 as the storage for the files (S3 rocks. It is really, really awesome). If you're stuck with the commercial search application, then run it on EC2 instances with autoscaling and an ELB. If you are allowed an alternative search engine, Elasticsearch is probably your best bet. I'd run it on EC2 instead of the AWS Elasticsearch service, as IMHO it's not ready yet. Don't autoscale Elasticsearch automatically though, it'll cause all sorts of issues. I have zero experience with CloudSearch so I can't comment on that. Regardless of which option, I'd use CloudFormation for all of it.

NEW QUESTION 20

A news organization plans to migrate their 20 TB video archive to AWS. The files are rarely accessed, but when they are, a request is made in advance and a 3 to 5-hour retrieval time frame is acceptable. However, when there is a breaking news story, the editors require access to archived footage within minutes.

Which storage solution meets the needs of this organization while providing the LOWEST cost of storage?

- A. Store the archive in Amazon S3 Reduced Redundancy Storage.

- B. Store the archive in Amazon Glacier and use standard retrieval for all content.

- C. Store the archive in Amazon Glacier and pay the additional charge for expedited retrieval when needed.

- D. Store the archive in Amazon S3 with a lifecycle policy to move this to S3 Infrequent Access after 30 days.

Answer: C

NEW QUESTION 21

Which of the following services can receive an alert from CloudWatch?

- A. AWS Elastic Block Store

- B. AWS Relational Database Service

- C. AWS Auto Scaling

- D. AWS Elastic Load Balancing

Answer: C

Explanation:

AWS Auto Scaling and Simple Notification Service (SNS) work in conjunction with CloudWatch. CloudWatch can send alerts to the AS policy or to the SNS end points. http://docs.aws.amazon.com/AmazonCloudWatch/latest/DeveloperGuide/related_services.html

NEW QUESTION 22

Network ACLs are ______ .

- A. stateful

- B. stateless

- C. asynchronous

- D. synchronous

Answer: B

Explanation:

Network ACLs are stateless; responses to allowed inbound traffic are subject to the rules for outbound traffic (and vice versa). http://docs.aws.amazon.com/AmazonVPC/latest/UserGuide/VPC_ACLs.html
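The practical consequence of statelessness can be shown with a toy model. This is not how a VPC evaluates rules; both function names are hypothetical, and the stateful variant is included only for comparison with a filter that tracks connections.

```python
def stateless_round_trip_allowed(inbound_allowed, outbound_allowed):
    """Stateless filter: the request must pass the inbound rules AND the
    response must separately pass the outbound rules."""
    return inbound_allowed and outbound_allowed


def stateful_round_trip_allowed(inbound_allowed, outbound_allowed):
    """Stateful filter (for comparison): the response to an allowed inbound
    request is allowed automatically, so outbound rules are not consulted."""
    return inbound_allowed


# Inbound traffic is allowed, but no outbound rule covers the response.
print(stateless_round_trip_allowed(True, False))
print(stateful_round_trip_allowed(True, False))
```

In the stateless case the response is dropped even though the request was allowed, which is why a network ACL needs matching rules in both directions.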

NEW QUESTION 23

By default, EBS volumes that are created and attached to an instance at launch are deleted when that instance is terminated. You can modify this behavior by changing the value of the flag ______ to false when you launch the instance.

- A. DeleteOnTermination

- B. RemoveOnDeletion

- C. RemoveOnTermination

- D. TerminateOnDeletion

Answer: A

Explanation:

By default, Amazon EBS root device volumes are automatically deleted when the instance terminates. However, by default, any additional EBS volumes that you attach at launch, or any EBS volumes that you attach to an existing instance persist even after the instance terminates.

This behavior is controlled by the volume’s DeleteOnTermination attribute, which you can modify. http://docs.aws.amazon.com/AWSEC2/latest/UserGuide/terminating-instances.html
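As an illustration, the attribute is typically set through a block device mapping supplied at launch. The sketch below only builds the mapping as a plain dictionary and does not call AWS; the key names follow the `BlockDeviceMappings` parameter of the EC2 RunInstances API, while the helper name and the device name `/dev/sda1` are assumptions chosen for the example.

```python
def block_device_mapping(device_name, delete_on_termination):
    """Build one block device mapping entry in the shape used by the EC2
    RunInstances API (BlockDeviceMappings parameter)."""
    return {
        "DeviceName": device_name,
        "Ebs": {"DeleteOnTermination": delete_on_termination},
    }


# Keep the volume on a hypothetical device after the instance terminates.
mappings = [block_device_mapping("/dev/sda1", False)]
print(mappings)
```

A list like `mappings` could then be passed as the block device mappings of a launch request so that the volume persists after termination.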

NEW QUESTION 24

Disabling automated backups ______ disable the point-in-time recovery.

- A. if configured to, can

- B. will never

- C. will

Answer: C

NEW QUESTION 25

You are setting up some IAM user policies and have also become aware that some services support resource-based permissions, which let you attach policies to the service's resources instead of to IAM users or groups. Which of the below statements is true in regards to resource-level permissions?

- A. All services support resource-level permissions for all actions.

- B. Resource-level permissions are supported by Amazon CloudFront

- C. All services support resource-level permissions only for some actions.

- D. Some services support resource-level permissions only for some actions.

Answer: D

Explanation:

AWS Identity and Access Management is a web service that enables Amazon Web Services (AWS) customers to manage users and user permissions in AWS. The service is targeted at organizations with multiple users or systems that use AWS products such as Amazon EC2, Amazon RDS, and the AWS Management Console. With IAM, you can centrally manage users, security credentials such as access keys, and permissions that control which AWS resources users can access. In addition to supporting IAM user policies, some services support resource-based permissions, which let you attach policies to the service's resources instead of to IAM users or groups. Resource-based permissions are supported by Amazon S3, Amazon SNS, and Amazon SQS. With resource-level permissions, a service supports IAM policies in which you can specify individual resources using Amazon Resource Names (ARNs) in the policy's Resource element. Some services support resource-level permissions only for some actions.
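To make the Resource element concrete, here is a minimal sketch of a policy document that names an individual resource by ARN. The bucket name `example-bucket` and the chosen action are hypothetical; the document is only built and printed, not attached to anything.

```python
import json

# An IAM policy that restricts one action to objects in one S3 bucket.
# The Resource element carries the ARN of the individual resource.
policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            "Resource": ["arn:aws:s3:::example-bucket/*"],  # hypothetical bucket
        }
    ],
}

print(json.dumps(policy, indent=2))
```

Whether a given action accepts a specific ARN here, rather than only `*`, depends on the service, which is the point of answer D.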

NEW QUESTION 26

Is it possible to publish your own metrics to CloudWatch?

- A. Yes, but only if the data is aggregated.

- B. No, it is not possible.

- C. No, metrics are in-built and cannot be defined explicitly.

- D. Yes, it can be done by using the put-metric-data command.

Answer: D

Explanation:

You can publish your own metrics to CloudWatch using the AWS CLI or an API. You can view statistical graphs of your published metrics with the AWS Management Console. CloudWatch stores data about a metric as a series of data points. Each data point has an associated time stamp. You can even publish an aggregated set of data points called a statistic set.

http://docs.aws.amazon.com/AmazonCloudWatch/latest/DeveloperGuide/publishingMetrics.html
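A statistic set is just a pre-aggregated summary of several data points. The sketch below computes one locally without contacting CloudWatch; the field names follow the statistic values structure of the PutMetricData API (SampleCount, Sum, Minimum, Maximum), while the function name and the sample latencies are made up for illustration.

```python
def to_statistic_set(values):
    """Aggregate raw data points into a statistic set (local computation only)."""
    if not values:
        raise ValueError("at least one data point is required")
    return {
        "SampleCount": float(len(values)),
        "Sum": float(sum(values)),
        "Minimum": float(min(values)),
        "Maximum": float(max(values)),
    }


# Hypothetical request latencies, in milliseconds, collected over one minute.
latencies_ms = [12, 48, 31, 9]
stats = to_statistic_set(latencies_ms)
print(stats)
```

Publishing one summary like this instead of every individual data point reduces the number of calls needed for high-volume metrics.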

NEW QUESTION 27

Which of the following are true regarding encrypted Amazon Elastic Block Store (EBS) volumes? (Choose two.)

- A. Supported on all Amazon EBS volume types

- B. Snapshots are automatically encrypted

- C. Available to all instance types

- D. Existing volumes can be encrypted

- E. Shared volumes can be encrypted

Answer: AB

Explanation:

This feature is supported on all Amazon EBS volume types (General Purpose (SSD), Provisioned IOPS (SSD), and Magnetic). You can access encrypted Amazon EBS volumes the same way you access existing volumes; encryption and decryption are handled transparently and they require no additional action from you, your Amazon EC2 instance, or your application. Snapshots of encrypted Amazon EBS volumes are automatically encrypted, and volumes that are created from encrypted Amazon EBS snapshots are also automatically encrypted.

NEW QUESTION 28

What does Amazon Elastic Beanstalk provide?

- A. A scalable storage appliance on top of Amazon Web Services.

- B. An application container on top of Amazon Web Services.

- C. A service by this name doesn't exist.

- D. A scalable cluster of EC2 instances.

Answer: B

NEW QUESTION 29

Which of the following statements is true of an Auto Scaling group?

- A. An Auto Scaling group cannot span multiple regions.

- B. An Auto Scaling group delivers log files within 30 minutes of an API call.

- C. Auto Scaling publishes new log files about every 15 minutes.

- D. An Auto Scaling group cannot be configured to scale automatically.

Answer: A

Explanation:

An Auto Scaling group can contain EC2 instances that come from one or more Availability Zones within the same region. However, an Auto Scaling group cannot span multiple regions. http://docs.aws.amazon.com/AutoScaling/latest/DeveloperGuide/US_AddAvailabilityZone.html

NEW QUESTION 30

You can create a CloudWatch alarm that watches a single metric. The alarm performs one or more actions based on the value of the metric relative to a threshold over a number of time periods. Which of the following states is possible for the CloudWatch alarm?

- A. ERROR

- B. THRESHOLD

- C. ALERT

- D. OK

Answer: D

Explanation:

You can create a CloudWatch alarm that watches a single metric. The alarm performs one or more actions based on the value of the metric relative to a threshold over a number of time periods. The action can be an Amazon EC2 action, an Auto Scaling action, or a notification sent to an Amazon SNS topic.

An alarm has three possible states:

OK--The metric is within the defined threshold

ALARM--The metric is outside of the defined threshold

INSUFFICIENT_DATA--The alarm has just started, the metric is not available, or not enough data is available for the metric to determine the alarm state

http://docs.aws.amazon.com/AmazonCloudWatch/latest/DeveloperGuide/AlarmThatSendsEmail.html
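The three states can be illustrated with a simplified evaluator. This is a sketch under stated assumptions, not CloudWatch's actual evaluation logic: the function name is hypothetical, the comparison is assumed to be "greater than the threshold", and the alarm is assumed to fire only when every one of the most recent periods breaches.

```python
def alarm_state(datapoints, threshold, periods):
    """Return 'OK', 'ALARM' or 'INSUFFICIENT_DATA' for a single metric.

    `datapoints` holds one metric value per period, oldest first.
    """
    if len(datapoints) < periods:
        # Not enough data yet to evaluate the requested number of periods.
        return "INSUFFICIENT_DATA"
    recent = datapoints[-periods:]
    if all(value > threshold for value in recent):
        return "ALARM"
    return "OK"


# Hypothetical CPU utilisation samples against an 80% threshold over 3 periods.
print(alarm_state([], 80, 3))
print(alarm_state([90, 95, 99], 80, 3))
print(alarm_state([90, 50, 99], 80, 3))
```

The three calls return INSUFFICIENT_DATA, ALARM and OK respectively, covering each state listed above.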
